What Is AI in Digital Marketing? Use Cases, Benefits, Challenges, and Tools

TL;DR

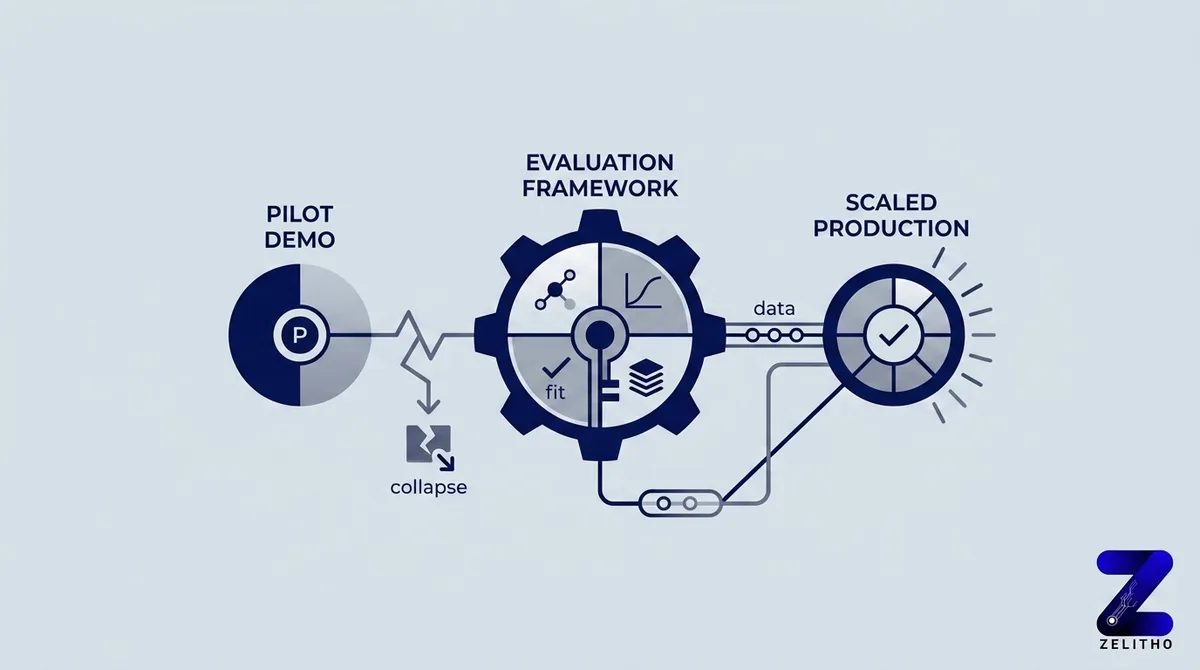

You ran a pilot. The vendor demo looked clean. Your team trained on the tool. Three months later, usage sits at 12% and the ROI slide has gone quiet.

Most AI marketing content lists benefits without addressing why implementations collapse between proof of concept and production. That gap is not a technology problem. It is a fit, data, and adoption problem that vendor content is structurally motivated to ignore.

This article runs four documented failure modes through a decision framework called the Fit-First Framework. Each failure mode carries a measurable cost. Each section gives senior marketers, founders, and agency owners a concrete signal to test against their own operation before committing budget to a tool whose conditions for success they may not meet.

What percentage of AI marketing initiatives actually succeed?

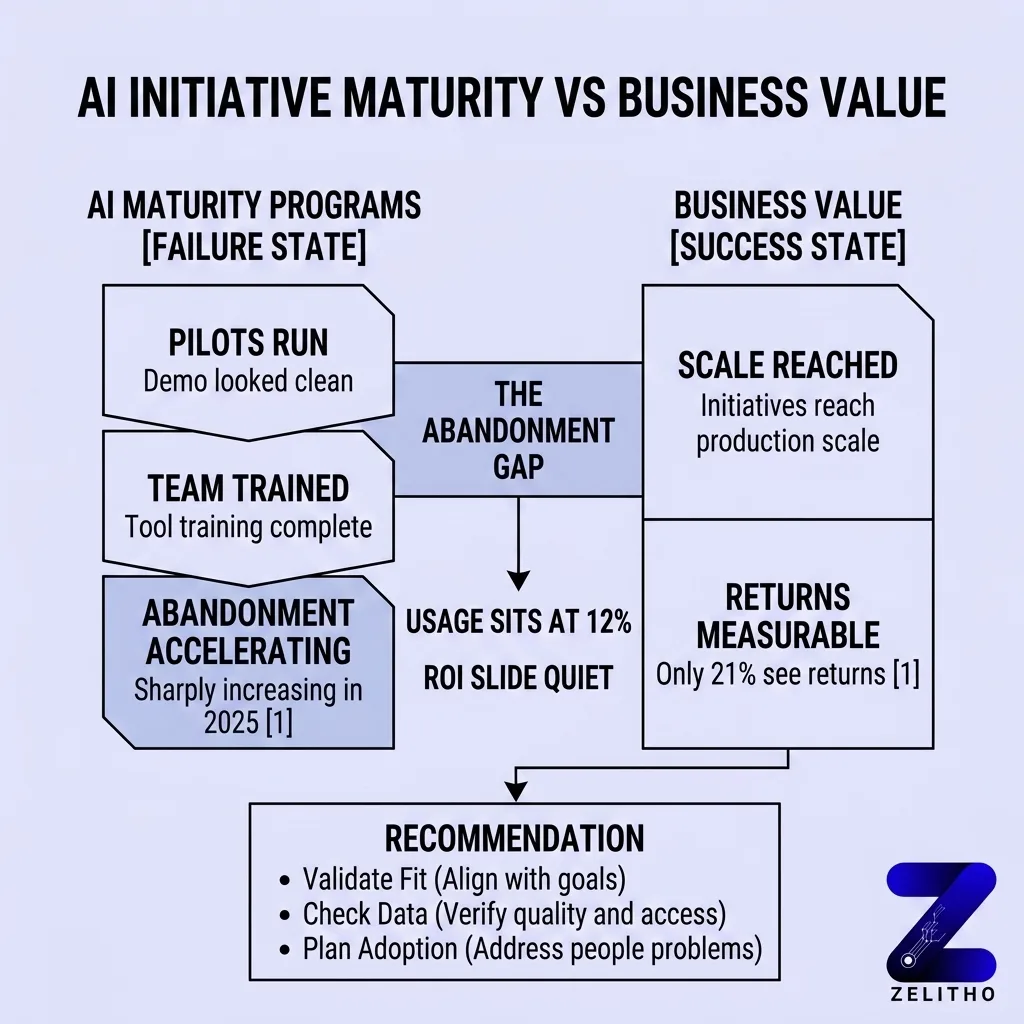

Only 21% of AI initiatives reach production scale with measurable returns [1]. The majority of companies currently running AI tools are not capturing business value from them. The failure is not concentrated in early-stage teams. Companies with mature AI programs still report abandonment rates that rose sharply in 2025 [1].

Why 79% of AI Initiatives Never Pay Off, and What the Promotions Leave Out

Start with the structural problem: most AI marketing content is written by the same vendors selling the tools, so failure cases are excluded from it by design.

Here is what the promotions leave out. 88% of companies use AI regularly, but only 21% of AI initiatives reach production scale with measurable returns [1]. That gap is not a rounding error. It is the default condition.

42% of companies abandoned most of their AI initiatives in 2025, up from 17% in 2024 [1]. The abandonment rate more than doubled in one year. Nor is this a minority outcome confined to under-resourced teams: 46% of AI pilots get scrapped between proof of concept and broad adoption [1].

Stop waiting for your pilot to “mature into adoption.” Start treating failure as the baseline probability you are working against.

The promotions show the 5-to-10-user pilot working smoothly [1]. They skip what happens when that pilot has to scale to 40 people across functions and clear procurement, security review, and training cycles. That is where the 79% disappear.

One concrete signal: if a vendor’s content has no section on what breaks their tool at scale, the content was written for acquisition, not for your success.

The Four Failure Modes: Where AI Marketing Breaks Down Before It Scales

Four documented failure modes explain where marketing AI breaks down before it reaches value. Naming them matters because vague “underperformance” gets blamed on the vendor, the tool, or the team, when the actual mechanism is specific and fixable before you spend.

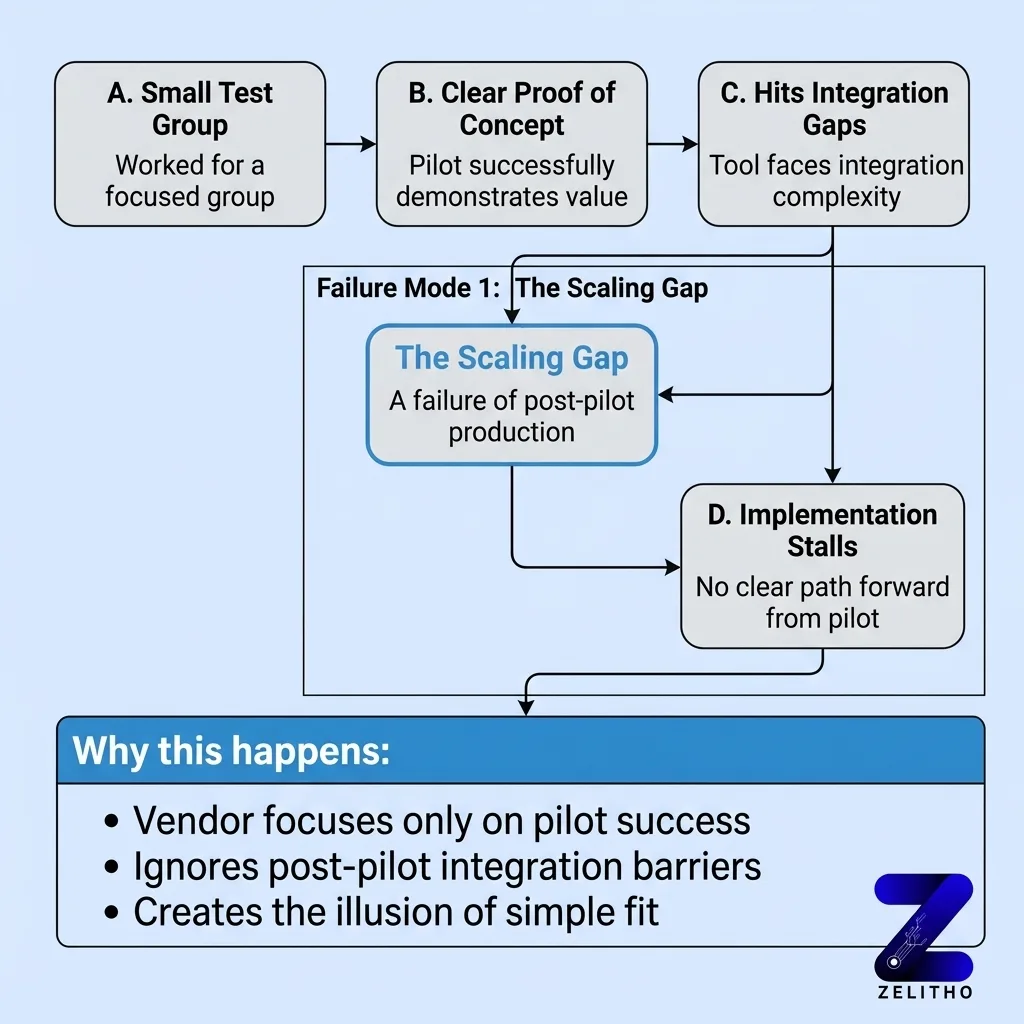

Failure Mode 1: The Scaling Gap

A pilot clears proof of concept. Then it stalls. 95% of enterprise AI pilots are said to fail because of integration gaps [1]. The tool that worked for a small test group hits approval chains, IT dependencies, and workflow conflicts at scale. 88% of companies report regular AI use, while nearly two-thirds have not started enterprise-wide scaling. That gap between “we use AI” and “AI runs in production across the team” is where budget disappears.

Failure Mode 2: Data Unreadiness

65% of organizations either lack AI-ready data or are unsure whether they have it [1]. Over 60% of AI projects without AI-ready data are abandoned. 74% of companies struggle to scale AI value because of weak data foundations. 62% identify data governance as the biggest obstacle [1].

The AI tool is not failing. It is producing outputs from inconsistent, fragmented, or unstructured inputs. Bad inputs do not become good outputs because the model is sophisticated.

A content team trained on a new AI writing platform over three weeks. Eight weeks later, outputs required heavy manual revision on every piece. The issue was not the tool. Their content briefs, brand guidelines, and tone documentation had never been consolidated into a format the system could reference. They fixed the documentation layer. Output revision time dropped by half.

Failure Mode 3: Security Friction

48% of high-maturity organizations name security threats as a top-three barrier [1]. Security review delays last 6 to 12 months in many enterprises. For funded companies and agencies managing client data, this is not a theoretical risk. A tool that clears marketing evaluation gets stopped at IT review for months. The budget cycle closes before approval lands.

Failure Mode 4: Cultural Resistance

54% of executives cite cultural resistance as a top barrier to AI adoption [1]. 46% of workers at companies undergoing AI redesign worry about job security. Organizations with strong change management are 6 times more likely to succeed.

The failure here is not that people refuse to learn the tool. A team can post 80% training completion and 65% survey agreement that the tool is useful, and still show a 12% actual usage rate three months later [1]. Training attendance and genuine adoption are different metrics. Most implementation plans track the first one and miss the second.

| Failure Mode | Primary Signal | Measurable Cost |
|---|---|---|
| Scaling Gap | Pilot works, production stalls | 95% enterprise pilot failure rate |
| Data Unreadiness | Outputs need heavy revision | 60%+ project abandonment rate |
| Security Friction | Tool stuck in IT review | 6-12 month delay cycles |
| Cultural Resistance | Training done, usage at 12% | 6x lower success rate without change management |

Each failure mode has a different fix. Diagnosing “AI isn’t working” without naming the mode means the next tool purchase will fail the same way.

The Fit-First Framework: Sorting High-Value AI Uses from Weak Ones Before You Spend

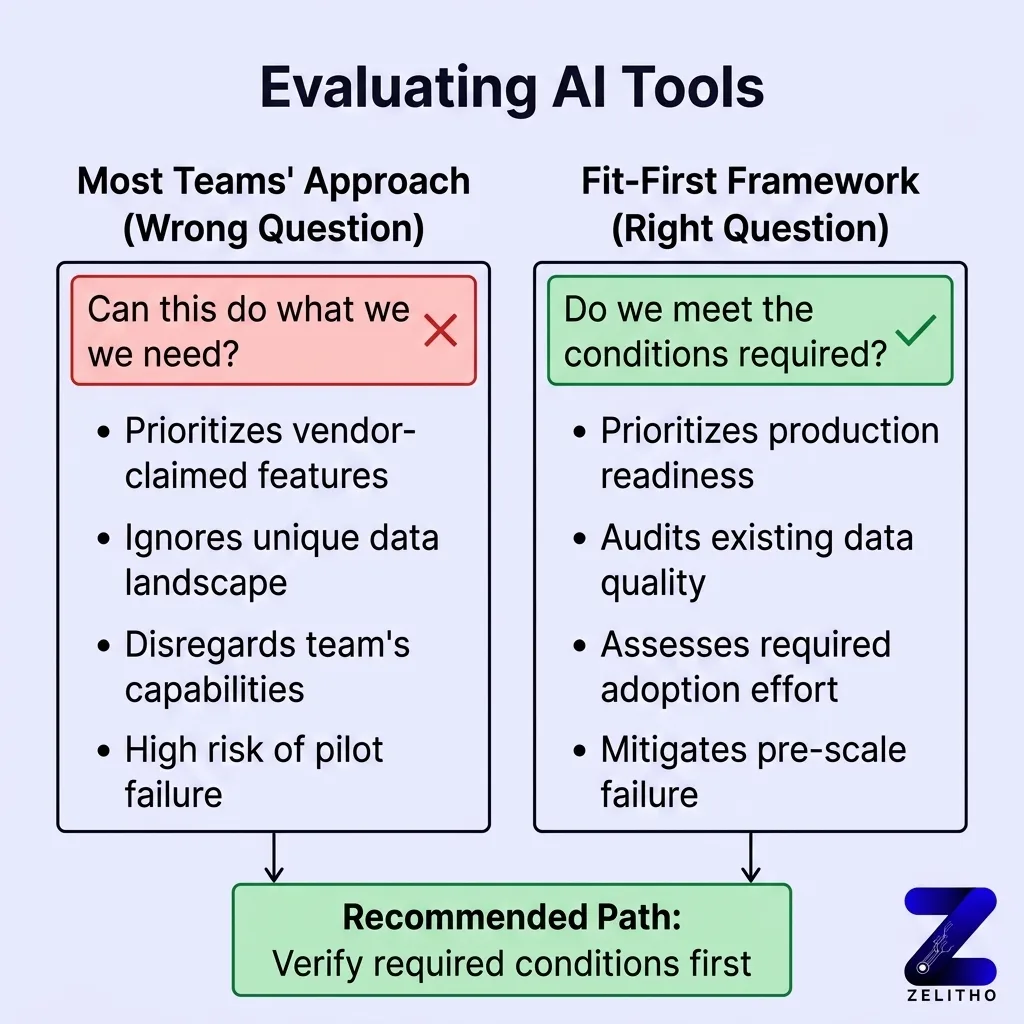

The Fit-First Framework is a sorting system. Its purpose is to eliminate low-fit applications before budget is allocated, not after a pilot collapses.

Most teams evaluate AI tools by asking: “Can this do what we need?” That is the wrong first question. The right first question is: “Do we meet the conditions this tool requires to function at production scale?”

59% of CMOs report insufficient budget to execute strategy [1]. Marketing budgets sit flat at 7.7% of company revenue [1]. A failed AI pilot does not just waste a line item. It consumes budget that had no slack to absorb the loss.

The Fit-First Framework sorts use cases across three criteria before any vendor evaluation begins.

Criterion 1: Repeatability

Is the use case a repeating, structured task or a judgment-intensive, variable one? AI produces consistent returns on repeating tasks with defined inputs. One documented example: a 75% reduction in lead nurture creation time from a pilot where the inputs were templated and the output format was fixed [1]. That result depends on structure, not on the AI being powerful.

Apply the same tool to that team's creative strategy work, and the results degrade. The inputs are variable. The judgment requirements are high. Run through the Fit-First Framework, the tool is not a fit for that task.

Criterion 2: Data Availability

Does your team have clean, consolidated source material the tool can work from? Brand voice documentation, style guides, approved messaging, and audience segments must exist in a usable format before the tool is purchased.

If the answer is “we have it but it is scattered,” the first investment is documentation consolidation, not AI tooling.

Criterion 3: Integration Dependency

How many other systems does this tool need to connect to? Every additional integration adds security review surface, IT dependency, and failure points. When secure pathways replace approval bottlenecks, output velocity can increase by 10x [1]. That gain disappears if the integration never clears the review queue.

Use the Fit-First Framework as a pre-purchase filter, not a post-launch retrospective.
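One way to make the pre-purchase filter concrete is to record each candidate use case against the three criteria and hold anything that fails one. The Python sketch below is a hypothetical illustration, not a tool the framework prescribes: the `UseCase` fields, the `MAX_INTEGRATIONS` limit of 2, and the example use cases are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    repeatable: bool         # Criterion 1: structured, repeating task with defined inputs
    data_consolidated: bool  # Criterion 2: clean, consolidated source material already exists
    integrations: int        # Criterion 3: number of other systems the tool must connect to


# Hypothetical limit: beyond this, assume integration adds too much review surface.
MAX_INTEGRATIONS = 2


def fit_first(use_case: UseCase) -> list[str]:
    """Return the criteria the use case fails; an empty list means it passes."""
    failures = []
    if not use_case.repeatable:
        failures.append("repeatability")
    if not use_case.data_consolidated:
        failures.append("data availability")
    if use_case.integrations > MAX_INTEGRATIONS:
        failures.append("integration dependency")
    return failures


# Hypothetical candidates, loosely modeled on the examples in this section.
candidates = [
    UseCase("lead nurture emails", repeatable=True, data_consolidated=True, integrations=1),
    UseCase("creative strategy", repeatable=False, data_consolidated=True, integrations=0),
    UseCase("brand-voice content", repeatable=True, data_consolidated=False, integrations=2),
]

for uc in candidates:
    failed = fit_first(uc)
    status = "shortlist for vendor evaluation" if not failed else "hold: fails " + ", ".join(failed)
    print(f"{uc.name}: {status}")
```

A failed criterion does not reject the use case outright; it routes it to prerequisite work (for example, documentation consolidation for data availability) before any vendor demo.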

What Readiness Actually Looks Like, and the Signals That Tell You If Your Team Qualifies

Readiness is not a feeling. It is a set of operational conditions, and most teams overestimate theirs.

Organizations with AI-ready data report a 26% improvement in business outcomes [1]. That figure is not about having more data. It is about having structured, governed, and accessible data. 62% of companies name data governance as their biggest obstacle [1]. Governance means documented ownership, consistent formatting, and clear access rules. If your team cannot answer who owns each data source and how it is maintained, you do not have AI-ready data yet.

Security readiness signal: Has your organization completed a vendor security review in the last 12 months for a comparable SaaS tool? If that review took longer than 90 days, assume the same timeline applies here. Plan your pilot start date accordingly. If the pilot window closes before approval lands, the initiative fails for process reasons, not performance reasons.
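The security timing check is plain date arithmetic: project the approval date from the duration of the last comparable review and compare it with the pilot window. A minimal Python sketch, assuming the last review's duration is the best available estimate; the function name and all dates are hypothetical.

```python
from datetime import date, timedelta


def approval_fits_window(review_start: date, last_review_days: int,
                         pilot_window_closes: date) -> tuple[date, bool]:
    """Project approval from the last comparable review and check it against the pilot window."""
    projected_approval = review_start + timedelta(days=last_review_days)
    return projected_approval, projected_approval <= pilot_window_closes


# Hypothetical: review starts 1 Feb 2025, the last comparable review took 120 days,
# and the budget-funded pilot window closes 30 Apr 2025.
approval, fits = approval_fits_window(date(2025, 2, 1), 120, date(2025, 4, 30))
print(approval, fits)
```

In this example the projected approval lands on 1 June 2025, a month after the window closes, so either the pilot start date moves or the security review starts earlier.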

Team readiness signal: 46% of workers at companies actively redesigning around AI report job security concerns [1]. That number drops to 34% at companies with less advanced AI programs [1]. The gap tells you something specific. The more visibly your team restructures around AI, the more actively you need to address the job security question. Teams that receive no direct communication about role changes self-generate the worst-case version. That anxiety can show up as 12% usage rates on tools that 80% of the team trained on [1].

Adoption readiness signal: Do you have a named person responsible for adoption, not deployment? Deployment means the tool is live. Adoption means usage meets the threshold required for the ROI model to work. These are different accountabilities. If the same person owns both, adoption typically loses to deployment pressure.
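Separating the two accountabilities is easier when adoption is measured against the usage level the ROI model assumes, not against training completion. A minimal Python sketch; the 50-person team, the 60% usage threshold, and the function name are hypothetical, while the 80% trained and 12% active split mirrors the figures cited above.

```python
def adoption_report(team_size: int, trained: int, weekly_active: int,
                    roi_usage_threshold: float) -> dict:
    """Compare training completion (a deployment metric) with active usage (an adoption metric)."""
    if team_size <= 0:
        raise ValueError("team_size must be positive")
    training_rate = trained / team_size
    usage_rate = weekly_active / team_size
    return {
        "training_rate": training_rate,
        "usage_rate": usage_rate,
        # Adoption counts as met only when real usage clears the level the ROI model assumes.
        "adoption_met": usage_rate >= roi_usage_threshold,
    }


# Hypothetical 50-person team: 40 trained (80%), 6 still active (12%),
# against an assumed ROI model that needs 60% weekly usage.
report = adoption_report(team_size=50, trained=40, weekly_active=6, roi_usage_threshold=0.60)
print(report)
```

A deployment owner would report this rollout as 80% complete; an adoption owner would report it as failing its ROI condition.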

The 26% outcome improvement tied to AI-ready data is not available to teams that skip the readiness audit [1]. There is no shortcut through this step that preserves the downstream result.

Organizations with strong change management succeed at AI implementation at 6 times the rate of those without it [1]. Change management is not a workshop. It is a standing communication protocol, a clear role narrative, and a feedback loop that surfaces resistance before it becomes abandonment.


What to Demand from AI Vendors Before You Sign Anything

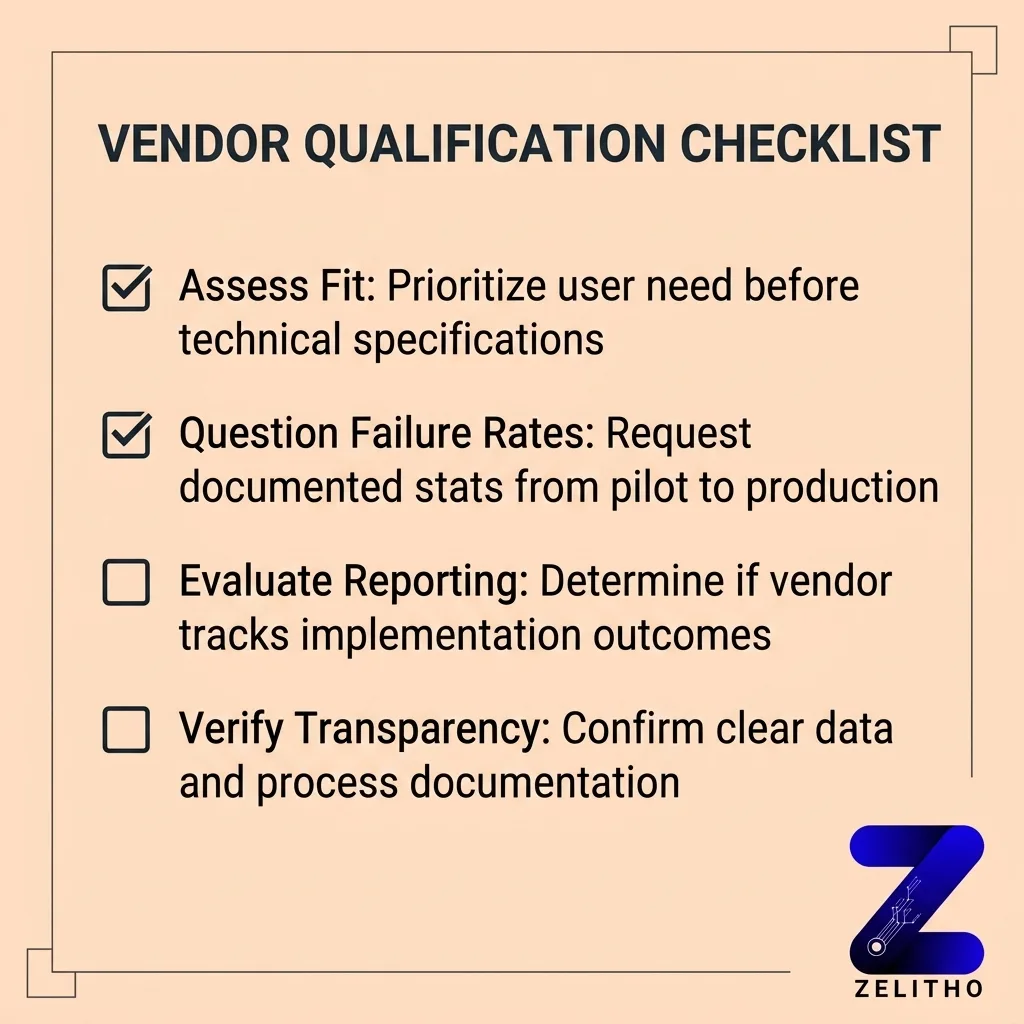

Run the Fit-First Framework against every vendor conversation before you enter a demo.

Ask for their documented failure rate between pilot and production. If they do not have the number, they have not tracked it. If they have tracked it and will not share it, the number is bad. 46% of AI pilots get scrapped between proof of concept and adoption [1]. A vendor without a position on this statistic is not a serious implementation partner.

Ask what data format their tool requires as input. Get the technical spec in writing before the pilot begins. If your current documentation does not match that spec, name that as a pre-pilot work item with a time estimate attached.

Ask for the full security review checklist on day one. Security review delays run 6 to 12 months at enterprise scale [1]. If you are at a funded company with a real IT function, this timeline is your timeline unless you negotiate it into the contract terms.

Ask what change management resources they provide after deployment. Training attendance is not adoption. If the vendor measures success by training completion rates, you are measuring the wrong thing from the start.

The Fit-First Framework protects budget. It does not guarantee success. It eliminates the category of failure that comes from buying a tool before confirming the conditions for it to work.

Demand the failure data. Then decide.

FAQ

The 30% rule suggests that AI tools should handle no more than 30% of a given workflow without human review in the loop. It is a rough operational guideline, not a documented standard. The logic is that outputs beyond that threshold without oversight accumulate compounding errors that become expensive to correct downstream.

An estimated 95% of enterprise AI pilots fail because of integration gaps between the tool and existing systems [1]. The failure is rarely the model itself. It is the combination of incompatible data formats, security review delays, and workflow dependencies that prevent the tool from reaching production scale.

The four documented failure modes are the scaling gap, data unreadiness, security friction, and cultural resistance [1]. Each one operates independently. A team can have clean data and still fail because of cultural resistance. Diagnosing “AI isn’t working” without naming the specific mode leads to repeated budget loss.

Marketing is not dying. The roles that rely on repeating, templated, low-judgment tasks are contracting. Roles that require audience interpretation, strategic positioning, and editorial judgment are holding or growing. The threat is to specific task categories, not to the function as a whole.