How to Use AI in Your Marketing Strategy: Goals, Tools, and Implementation Considerations

TL;DR

You approved the demo. The vendor showed you a polished workflow. Now you have a subscription, three onboarding calls scheduled, and no clear answer to the question your CFO will ask: what does this fix?

Most marketing teams adopt AI tools before naming the problem the tool should solve. That sequence produces low adoption, wasted budget, and outputs no one trusts. The tool becomes a line item instead of a lever.

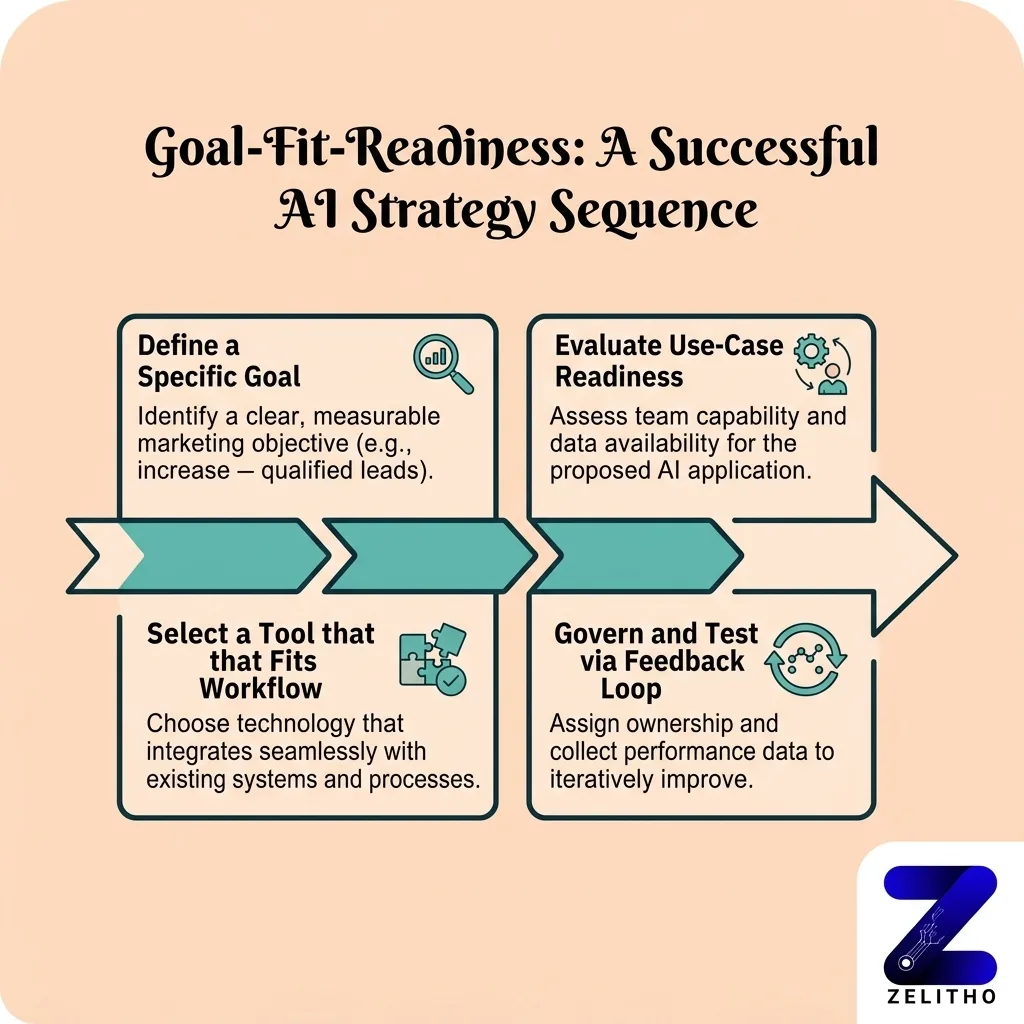

This article applies the Goal-Fit-Readiness framework to reverse that sequence. You anchor AI to a specific business goal, audit your data and team capability, then select tools. Senior marketers, founders scaling content operations, and agency owners managing client programs will leave with a decision path that connects every AI use case to a measurable outcome, not a feature list.

What Does It Actually Take to Use AI Effectively in a Marketing Strategy?

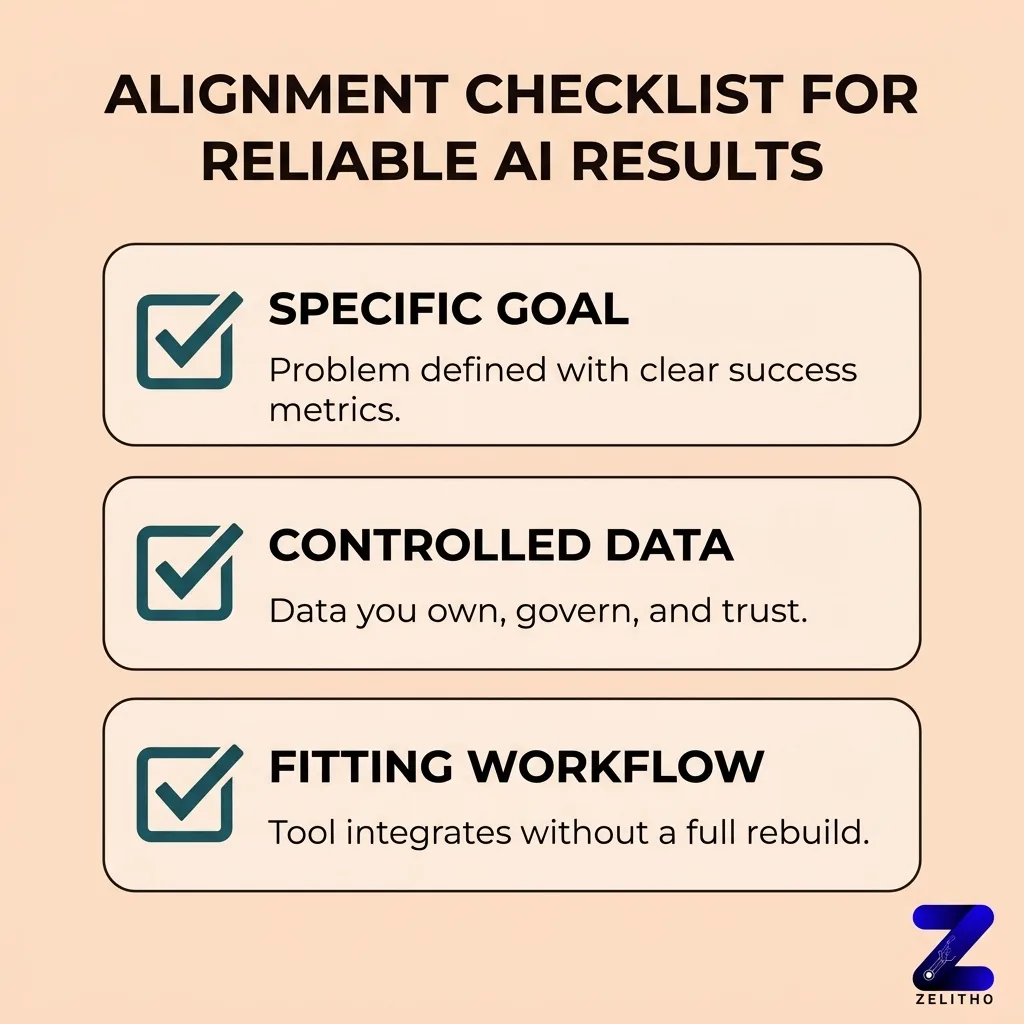

Three things must align before AI produces reliable results in a marketing program: a specific goal, data your team actually controls, and a workflow the tool can slot into without rebuilding everything around it. Miss one, and the tool runs in isolation. Miss two, and you have an expensive experiment with no owner.

Start With the Goal, Not the Tool: How to Select AI Use Cases That Deliver Business Value

Most teams enter AI adoption through a vendor demo, not a strategy conversation. That is the mistake.

Harvard Business Review research identified more than 400 advanced AI marketing use cases [4]. That number is not an opportunity catalog. It is a selection problem. Without a goal filter, any of those 400 use cases can look relevant. Most of them are not relevant to your program right now.

AI marketing investment is growing at 33% per year [3]. That growth accelerates pressure to adopt. Teams that respond to that pressure by evaluating tools first will spend budget before they have a measurable outcome to test against.

Stop evaluating AI by feature lists. Start with a one-sentence goal statement that names a measurable outcome.

The Goal-Fit-Readiness framework gives every AI decision a fixed starting point. Goal comes first. Fit, which is whether a use case matches your data and team, comes second. Readiness, meaning governance and workflow conditions, comes third. Tools come last.

Three goal categories cover most marketing AI use cases: customer acquisition, customer retention, and operational efficiency. Each maps to different AI capabilities.

| Goal Category | Representative Use Case | AI Capability Required |
|---|---|---|
| Customer Acquisition | Predictive lead scoring | Pattern recognition on CRM and behavioral data |
| Customer Retention | Personalized re-engagement sequences | Segmentation model plus content generation |
| Operational Efficiency | Automated content brief creation | Language model with defined output templates |

Before any tool evaluation begins, your team writes one sentence: “We want to [measurable outcome] within [timeframe] by improving [specific function].” That sentence is the filter. Every tool that does not serve that sentence gets removed from the evaluation list.
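To make the filter concrete, here is a minimal Python sketch that stores the goal sentence as a small record and drops candidate tools that do not serve the named function. The `GoalStatement` and `Tool` classes, the tool names, and the 15% figure are hypothetical, invented only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GoalStatement:
    outcome: str    # measurable outcome (illustrative value below)
    timeframe: str  # deadline for the outcome
    function: str   # the specific function being improved

    def sentence(self) -> str:
        # Render the one-sentence goal statement from the template in the text.
        return (f"We want to {self.outcome} within {self.timeframe} "
                f"by improving {self.function}.")


@dataclass(frozen=True)
class Tool:
    name: str
    functions_served: frozenset  # functions the tool claims to improve


def filter_tools(goal, tools):
    """Keep only tools that serve the function named in the goal statement."""
    return [t for t in tools if goal.function in t.functions_served]


# Hypothetical goal and candidate list, for illustration only.
goal = GoalStatement("raise qualified leads by 15%", "two quarters", "lead scoring")
candidates = [
    Tool("Tool A", frozenset({"lead scoring"})),
    Tool("Tool B", frozenset({"content briefs"})),
]
shortlist = filter_tools(goal, candidates)

print(goal.sentence())
print([t.name for t in shortlist])
```

Matching on an exact function name is deliberately strict. A tool that only loosely relates to the goal never reaches the evaluation list.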

AI adoption is not a technology decision. It is a prioritization decision with a technology layer attached.

What Your Data and Team Actually Need to Support Before Any AI Tool Goes Live

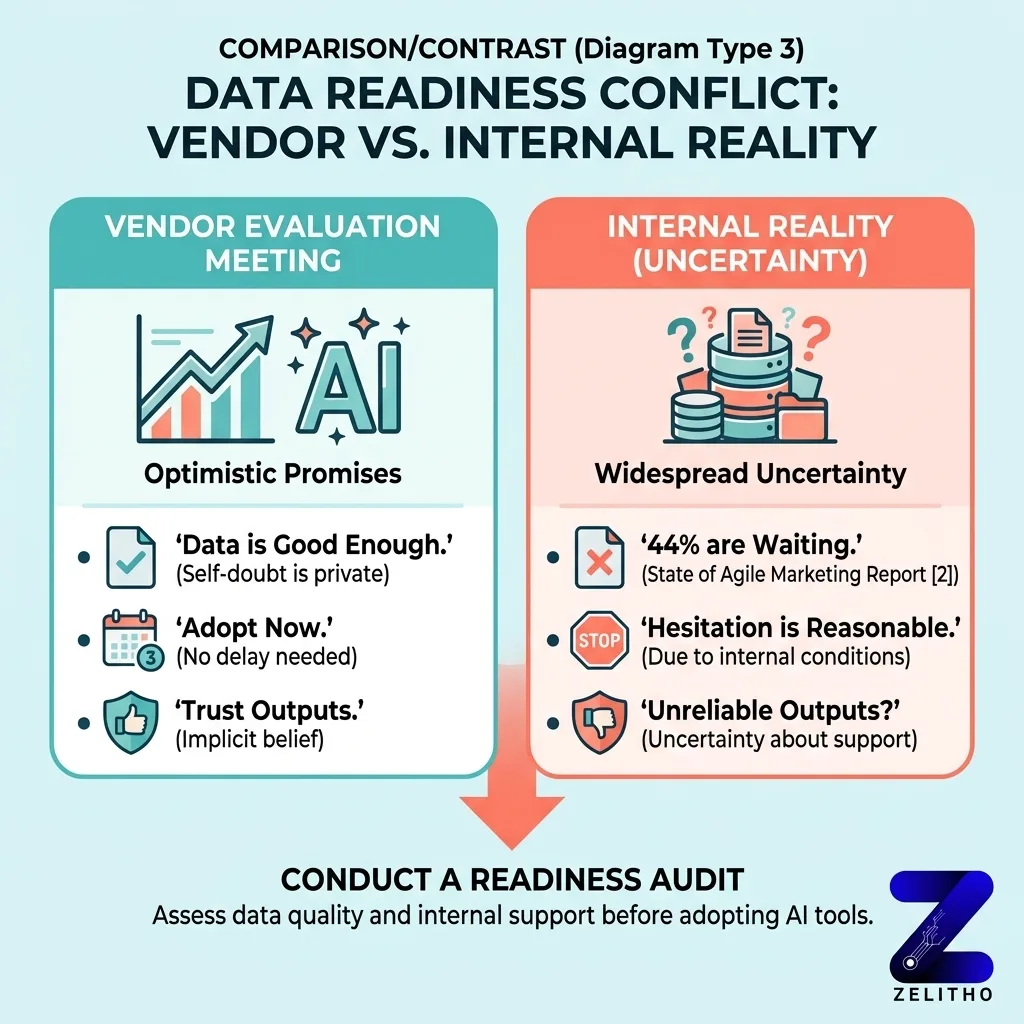

Here is what no one says in the vendor evaluation meeting: most teams are privately uncertain whether their data is good enough to support what the tool promises.

That uncertainty is widespread. According to the 9th annual State of Agile Marketing Report, 44% of companies are waiting for more established AI solutions before adopting [2]. The hesitation is not a lack of interest. It is a reasonable response to doubt about whether internal conditions can support reliable outputs.

The readiness audit has three columns: Data Quality, Team Capability, and Governance. Each column carries a pass threshold. Advancing a use case before passing all three produces outputs that look functional but behave unpredictably at scale.

A 360-degree customer view is the data condition that makes AI-driven personalization viable [1]. Without unified, clean data across acquisition, engagement, and transaction signals, personalization outputs reflect gaps in the data, not actual customer behavior. The model cannot compensate for missing inputs.

A team at a funded B2B company ran a dynamic email personalization program for six weeks before anyone audited the contact data feeding it. Thirty-one percent of the records were missing the engagement signal the model used to select content variants. The program appeared to run. Performance sat flat. When the data issue was corrected and the model re-run, reply rates moved within two weeks. The tool was never the problem.
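An audit like the one that team skipped can be small. The sketch below, written under stated assumptions, measures what share of contact records lack the field a model depends on. The `last_engaged` field name, the sample records, and the 5% threshold are hypothetical; set the threshold to whatever your own pass condition requires.

```python
def missing_share(records, field):
    """Return the fraction of records where `field` is absent or empty."""
    if not records:
        return 0.0
    missing = sum(1 for r in records if r.get(field) in (None, ""))
    return missing / len(records)


# Hypothetical contact export; real data would come from your CRM.
contacts = [
    {"email": "a@example.com", "last_engaged": "2024-11-02"},
    {"email": "b@example.com", "last_engaged": None},
    {"email": "c@example.com"},
    {"email": "d@example.com", "last_engaged": "2024-12-15"},
]

MAX_MISSING = 0.05  # assumed pass threshold, not a figure from the article

share = missing_share(contacts, "last_engaged")
print(f"{share:.0%} of records missing the signal; ready: {share <= MAX_MISSING}")
```

Running a check like this before launch, and again at each review, surfaces the kind of gap that otherwise shows up only as flat performance.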

Governance conditions are not IT checkboxes. Multifactor authentication and data loss prevention are the controls that determine whether AI-generated outputs can be trusted and distributed at scale [1]. If your output process bypasses those controls, you are publishing at risk, not at speed.

Three-column readiness checklist before any use case advances:

| Readiness Column | Pass Condition | Common Gap |
|---|---|---|
| Data Quality | Unified, deduplicated, source-verified | Siloed CRM and ad data with no join key |
| Team Capability | Named owner who interprets model output | Tool assigned to a workflow with no analyst |
| Governance | MFA enforced, DLP policy active on output channels | Outputs shared via uncontrolled channels before review |
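The checklist can be expressed as a simple gate: a use case advances only when all three columns pass. This is a minimal sketch. The column keys and the sample audit result are hypothetical, and how each column gets marked as passed remains a human judgment.

```python
READINESS_COLUMNS = ("data_quality", "team_capability", "governance")


def readiness_gaps(audit):
    """Return the columns that have not passed.

    A column missing from the audit counts as a gap, so an incomplete
    audit can never let a use case advance by accident.
    """
    return [col for col in READINESS_COLUMNS if not audit.get(col, False)]


# Hypothetical audit result for one use case.
audit = {"data_quality": True, "team_capability": False, "governance": True}

gaps = readiness_gaps(audit)
print("advance" if not gaps else f"blocked by: {', '.join(gaps)}")
```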

Treating tools as the entry point to AI adoption is the single most common and most costly mistake in marketing AI implementation. This article rejects that framing entirely.

Tool Selection, Workflow Integration, and Channel-Specific Application: A Criteria-First Approach

By early 2025, 69.1% of marketers had incorporated AI into their strategies [2]. The discovery problem is solved. The evaluation problem is not.

With most marketers already using some form of AI, the challenge is no longer finding tools. It is applying criteria that prevent you from adding tools that conflict with your stack, duplicate capabilities you already own, or require data access your governance policy does not permit.

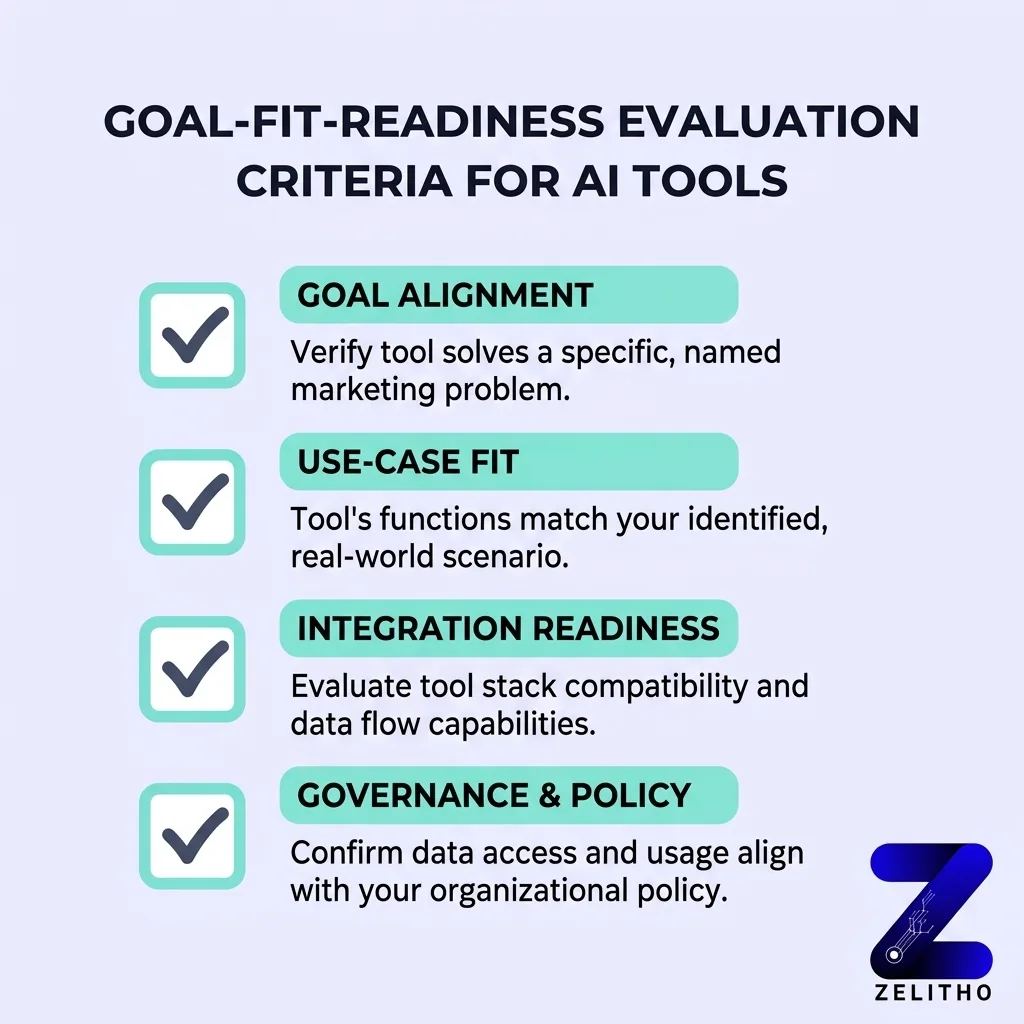

Four criteria govern every tool evaluation under the Goal-Fit-Readiness framework. First, goal alignment: does this tool directly serve the one-sentence goal statement you wrote before evaluation began? Second, integration depth: does it connect to your existing stack without a custom build? Third, data access requirements: does it need only data you control and can pipe cleanly? Fourth, output auditability: can a human reviewer trace how the output was generated?

Tools that pass all four get evaluated. Tools that fail any one get removed before the demo is booked.
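One way to apply that rule consistently is to record the four answers per tool and screen the list before booking anything. The sketch below assumes each criterion is answered yes or no. The `ToolScreen` class and the two sample tools are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class ToolScreen:
    goal_alignment: bool
    integration_depth: bool
    data_access: bool
    output_auditability: bool

    def failed(self):
        """Return the names of the criteria this tool does not meet."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]


# Hypothetical screening answers for two candidate tools.
screens = {
    "Tool A": ToolScreen(True, True, True, True),
    "Tool B": ToolScreen(True, False, True, False),
}

to_evaluate = [name for name, s in screens.items() if not s.failed()]
removed = {name: s.failed() for name, s in screens.items() if s.failed()}

print("evaluate:", to_evaluate)
print("removed:", removed)
```

Keeping the failed criteria alongside each removed tool gives you a record of why it was dropped, which helps when a vendor asks for a second look.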

Channel mapping matters. Different AI tool categories serve different stages of the marketing workflow.

| Tool Category | Channel Application | Workflow Stage |
|---|---|---|
| Predictive analytics | Paid acquisition, lead scoring | Pre-campaign |
| Content generation | Owned media, SEO, email | Pre-campaign and in-flight |
| Dynamic personalization engine | Email, web, in-app | In-flight |
| A/B testing and optimization tools | Conversion, landing pages | In-flight and post-campaign |

Every tool under evaluation gets assigned to a specific workflow stage before any contract discussion begins. Pre-campaign, in-flight, or post-campaign. If the stage is unclear, the use case is not ready to evaluate.

Achieving 1:1 personalization at scale requires the tool to connect to a clean data layer and a governed output process. Deploying a personalization engine without those two conditions produces content that is technically dynamic but strategically random.

What to look for in a vendor evaluation: defined API access to your existing CRM, documented data retention and deletion policy, output logs accessible to your team, and a named integration point with your email or CMS platform. What to ignore: case studies from industries unrelated to your use case, feature roadmap slides, and benchmark claims without methodology attached.

Implementation Without a Feedback Loop Is Just Deployment: How Iterative Testing Closes the Gap

Deployment is not implementation. This distinction costs teams months of usable signal.

A/B testing is not an optional post-launch step [1]. It is the mechanism that converts AI output from a hypothesis into a validated result. Without a defined test cadence, the model runs against assumptions that may no longer reflect current customer behavior. Six weeks of deployment without a review cycle means the model may be optimizing toward signals from a customer cohort that has already shifted.
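For teams that want to see what "validated" can mean in practice, here is a minimal sketch of one common approach: a two-sided two-proportion z-test comparing a control against an AI-generated variant. The article does not prescribe a specific test, so treat this as one illustration. The conversion counts are made up, and the function name is hypothetical.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates.

    conv_a / n_a is the control, conv_b / n_b is the variant.
    Returns (z, p_value), using the pooled standard error.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variation in outcomes; the test is undefined")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Made-up counts: 120 of 2,400 control sends converted, 156 of 2,400 variant sends.
z, p = two_proportion_z_test(120, 2400, 156, 2400)
print(f"z = {z:.2f}, p = {p:.3f}")
```

Decide the significance threshold and the sample size before the test starts. Checking results repeatedly and stopping at the first favorable reading inflates false positives.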

1:1 personalization at scale is not a product feature. It is a system output that depends on continuous signal input, output review, and model adjustment. Run it without those three, and you are scaling guesses.

The minimum viable feedback loop has three checkpoints. Input signal audit: are the data inputs still clean and current? Output performance review: are the outputs moving the metric tied to your goal statement? Model adjustment checkpoint: has anything in customer behavior or channel performance shifted since the last review?

This is the Goal-Fit-Readiness loop. It is the operational mechanism that keeps the framework active after launch.

Human oversight is the control layer that catches model drift, bias amplification, and channel-level performance degradation before they compound. That oversight requires a named owner, not a shared team responsibility. Shared responsibility produces gaps between review cycles.

Every AI use case that goes live must have one named owner responsible for the feedback loop cadence. Not responsible for the initial deployment. Responsible for the ongoing review interval. That owner sets the schedule, runs the input audit, reviews outputs against the goal metric, and flags when the model needs adjustment.

No use case goes live without a defined review interval assigned to a specific person.
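That rule is easy to enforce mechanically. The sketch below blocks go-live when the owner or interval is missing and flags a review as overdue once the interval has elapsed. The `UseCase` class, the owner name, the 14-day interval, and the dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class UseCase:
    name: str
    owner: Optional[str]                 # one named person, not a team
    review_interval_days: Optional[int]  # cadence the owner commits to
    last_review: Optional[date] = None

    def can_go_live(self) -> bool:
        """Go-live requires both a named owner and a defined review interval."""
        return bool(self.owner) and bool(self.review_interval_days)

    def review_overdue(self, today: date) -> bool:
        """True if no review has happened or the interval has elapsed."""
        if not self.can_go_live() or self.last_review is None:
            return True
        return today - self.last_review > timedelta(days=self.review_interval_days)


# Hypothetical use cases for illustration.
owned = UseCase("re-engagement emails", "J. Rivera", 14, date(2025, 1, 6))
orphan = UseCase("content briefs", None, None)

print(owned.can_go_live(), orphan.can_go_live())
print(owned.review_overdue(date(2025, 1, 27)))
```

Each review the owner runs would cover the three checkpoints: input signal audit, output performance review, and model adjustment.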

The Goal-Fit-Readiness Framework Is the Operating Logic, Not the Starting Checklist

The Goal-Fit-Readiness framework is not a checklist you complete once. It is the operating logic that determines whether AI adds leverage to your marketing system or adds complexity to it. Every AI use case in your strategy should be traceable back to a specific goal, evaluated against your actual data and team capability, matched to a tool that fits your workflow, and governed by a feedback loop with a named owner. That sequence (goal before tool, readiness before deployment, testing before scale) separates marketing teams that get durable returns from AI from teams that accumulate subscriptions. Start with what you are trying to change. Let that drive everything else.

FAQ

What is the Goal-Fit-Readiness framework?

Goal-Fit-Readiness is a three-stage decision sequence for AI adoption in marketing. You define a specific measurable goal first, then evaluate whether your data and team capability fit the use case, then assess governance readiness. Tools are selected after all three stages pass, not before.

Why do most AI marketing implementations fail?

Most implementations fail because teams select tools before naming the problem the tool should solve. The result is a tool running against unclear goals with inconsistent data. The output looks functional but does not move a trackable metric.

How do I know whether my data is ready for AI?

Your data is ready when it is unified across acquisition, engagement, and transaction sources, deduplicated, and source-verified. A 360-degree customer view is the baseline condition for reliable personalization output. Partial or siloed data produces personalization that reflects data gaps, not customer behavior.

What should I do before evaluating AI tools?

Write one sentence naming a measurable outcome, a timeframe, and the specific function the tool would improve. Run the three-column readiness audit covering data quality, team capability, and governance. If any column fails its pass threshold, resolve the gap before evaluating tools.

How often should a live AI use case be reviewed?

Every AI use case should have a defined review interval assigned to a named owner before it goes live. At minimum, run an input signal audit, an output performance review, and a model adjustment checkpoint. Six weeks without a review cycle is long enough for model drift to affect results.

References and Citations

[1] https://www.concordusa.com/blog/what-you-need-to-know-before-adding-ai-to-your-marketing-strategy

[2] https://www.agilesherpas.com/blog/implementing-ai-in-marketing

[3] https://supermetrics.com/blog/how-to-use-ai-in-marketing

[4] https://hbr.org/2021/07/how-to-design-an-ai-marketing-strategy