Top Semantic SEO Strategies to Rank Higher in 2026

Note: This is Part 3 of our series on How to Use Semantic SEO and Natural Language Processing to Boost Rankings. You can find links to the other parts at the end of this page. Happy Reading 🙂

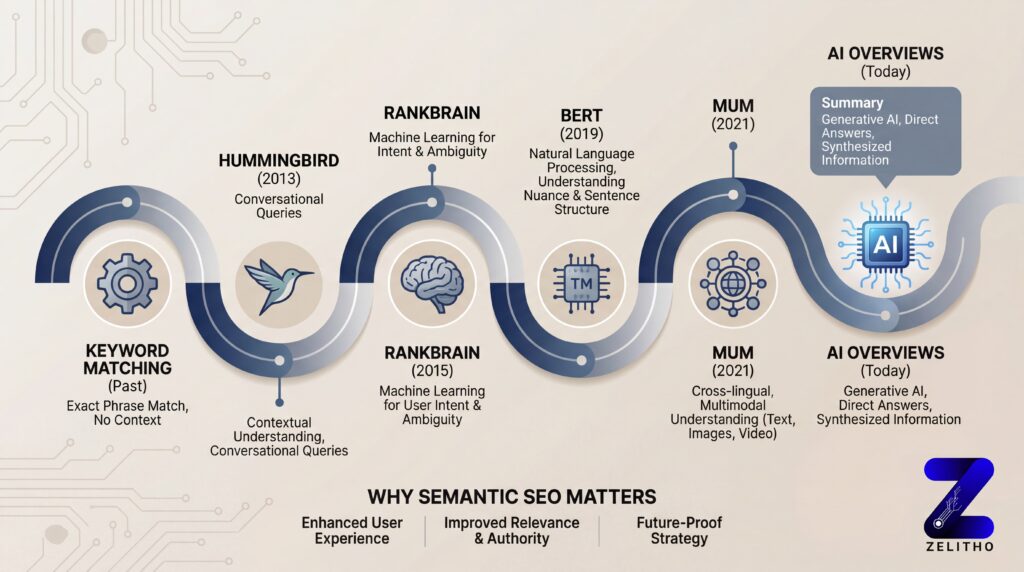

Key Google Algorithm Updates That Shaped Semantic SEO

Google’s evolution toward semantic search didn’t happen overnight. A series of major algorithm updates progressively shifted search from keyword matching to meaning understanding. Understanding this history clarifies why semantic SEO isn’t just an advanced tactic—it’s the foundation of how modern search works.

Hummingbird (2013): The Shift to Search Intent

Google’s Hummingbird update, released in 2013, was the first complete overhaul of the core algorithm since 2001. Hummingbird affected over 90% of searches by introducing natural language processing and semantic understanding to interpret entire queries rather than isolated keywords [1].

Before Hummingbird, Google primarily matched keywords in queries to keywords on pages. After Hummingbird, Google began understanding the *intent* behind searches. A query like “Where can I find good tacos near me” would now trigger results about nearby taco restaurants with good reviews—not just pages containing the words “find,” “good,” “tacos,” and “near.”

This update marked the beginning of semantic search and signaled that content creators needed to focus on answering user questions comprehensively rather than simply including target keywords repeatedly.

RankBrain (2015): Machine Learning Meets Search

Introduced in 2015, RankBrain brought machine learning directly into Google’s ranking algorithm [2]. At launch, its primary job was interpreting queries Google had never seen before, which made up about 15% of daily searches. Today, machine learning influences how all searches are processed.

RankBrain learns patterns from billions of searches to understand what users actually want. If users searching “best budget laptop for students” consistently click on results about affordable, portable computers with long battery life, RankBrain learns this pattern. The next time someone uses similar language or searches for related concepts, RankBrain applies this learned understanding to deliver better results.

For semantic SEO, RankBrain reinforced that comprehensive topic coverage performs better than keyword optimization. If your content thoroughly addresses a topic, it naturally becomes relevant for the semantic variations and related queries RankBrain connects to that topic.

BERT (2019): Natural Language Understanding Breakthrough

BERT (Bidirectional Encoder Representations from Transformers), introduced in 2019, dramatically advanced Google’s natural language understanding [3]. The key innovation is that BERT processes words bidirectionally, considering all the words in a sentence at once rather than reading them sequentially from left to right.

This bidirectional analysis allows BERT to understand context and nuance. In the query “2019 brazil traveler to usa need a visa,” BERT understands that “to” is critical: the query is about a Brazilian traveling to the USA, not the other way around. Pre-BERT, Google missed this distinction and returned results about U.S. citizens traveling to Brazil.
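A toy Python sketch can make the left-to-right versus bidirectional distinction concrete. It only lists which words of the visa query are available as context for “to” under each reading. It is not how BERT works internally (BERT applies Transformer self-attention across all tokens), and the helper names are hypothetical.

```python
# Toy illustration only: which words are visible as context for a target word
# under a strict left-to-right reading versus a bidirectional one.
# Real BERT uses Transformer self-attention, which this does not model.

def left_to_right_context(tokens: list[str], index: int) -> list[str]:
    """Words a strictly left-to-right reader has seen before position `index`."""
    return tokens[:index]


def bidirectional_context(tokens: list[str], index: int) -> list[str]:
    """Words on both sides of position `index`, as a bidirectional model sees them."""
    return tokens[:index] + tokens[index + 1:]


query = "2019 brazil traveler to usa need a visa".split()
target = query.index("to")

print("left-to-right:", left_to_right_context(query, target))
print("bidirectional:", bidirectional_context(query, target))
```

Only the bidirectional view includes “usa,” the word that tells a model which direction the trip goes.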

Initially affecting about 1 in 10 English-language searches in the U.S., BERT’s impact has expanded as Google refined the model. For content creators, BERT emphasized that natural, contextually rich writing performs better than keyword-stuffed text. Your content must read naturally while comprehensively covering topics, which is exactly what semantic SEO delivers.

MUM (2021): Multitask Unified Model

Google’s Multitask Unified Model (MUM), announced in 2021, was a major step forward in semantic understanding. Google describes MUM as 1,000 times more powerful than BERT, and it can understand information across languages and modalities such as text, images, and video [1].

MUM’s multimodal capability means it can understand a complex query like “I’ve hiked Mt. Adams, what should I prepare differently for Mt. Fuji?” by analyzing text about both mountains, comparing images of terrain, understanding video guides about hiking conditions, and synthesizing information across languages (since much Mt. Fuji content is in Japanese).

For semantic SEO, MUM reinforces the importance of comprehensive, multi-format content. Your topic coverage should span text, images, video, and other formats—all semantically connected and contextually rich.

AI Overviews and Generative Search (2023-2024)

Google’s AI Overviews (formerly Search Generative Experience, or SGE) represent the latest evolution in semantic search. Announced in May 2023 and officially launched as AI Overviews in May 2024, this feature uses generative AI to synthesize information from multiple sources and present comprehensive answers at the top of search results [3].

According to one industry analysis [4], AI Overviews appear for approximately 18.76% of keywords in U.S. search results, and 87.6% of these AI panels cite content from the Position 1 result.

To be selected for AI Overview citations, your content should be semantically rich, factually accurate, and comprehensive, and it should demonstrate clear expertise. This makes semantic SEO essential: surface-level, keyword-focused content is unlikely to earn these prominent placements.

How NLP Powers Modern Search and Content Analysis

Understanding how NLP actually works in search helps clarify why semantic optimization matters. Google’s NLP systems perform sophisticated analysis on both search queries and web content to determine relevance and meaning.

How NLP Analyzes Search Queries and Content

When you enter a search query, Google’s NLP systems immediately begin multi-layered analysis. First, they perform entity extraction—identifying specific entities mentioned in your query. If you search “best Italian restaurants in Chicago,” NLP identifies “Italian restaurants” as a business category entity and “Chicago” as a location entity.

Next comes syntactic analysis, examining sentence structure and grammatical relationships. This reveals how words relate to each other—which are subjects, which are modifiers, what actions are being described. In “restaurants my kids will enjoy,” syntax analysis identifies “kids” as the key constraint and “enjoy” as expressing preference.

Semantic analysis then determines the actual meaning and intent behind your query. NLP considers word meanings in context, identifies synonyms and related concepts, and maps your query to known entities and relationships in the Knowledge Graph.

Finally, sentiment analysis evaluates emotional tone—are you frustrated, excited, uncertain? A query like “help, my laptop won’t turn on” expresses urgency and distress, so Google prioritizes troubleshooting content over general laptop information.
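The first of these steps, entity extraction, can be sketched with a simple dictionary lookup (often called a gazetteer). This is a minimal illustration under stated assumptions: the phrase list, the type labels such as `BUSINESS_CATEGORY`, and the `extract_entities` helper are hypothetical, and production NLP systems rely on trained models rather than fixed word lists.

```python
# Toy dictionary-based (gazetteer) entity extractor. The phrases and type
# labels are hypothetical examples, not Google's entity types or data.
GAZETTEER = {
    "italian restaurants": "BUSINESS_CATEGORY",
    "restaurants": "BUSINESS_CATEGORY",
    "chicago": "LOCATION",
}


def extract_entities(query: str) -> list[tuple[str, str]]:
    """Return (phrase, type) pairs, preferring the longest matching phrase."""
    words = query.lower().split()
    max_len = max(len(phrase.split()) for phrase in GAZETTEER)
    entities = []
    i = 0
    while i < len(words):
        match = None
        # Try the longest candidate phrase starting at position i first.
        for length in range(min(max_len, len(words) - i), 0, -1):
            phrase = " ".join(words[i:i + length])
            if phrase in GAZETTEER:
                match = (phrase, GAZETTEER[phrase])
                i += length
                break
        if match:
            entities.append(match)
        else:
            i += 1  # No entity starts here; move to the next word.
    return entities


print(extract_entities("best Italian restaurants in Chicago"))
```

Matching the longest phrase first keeps “italian restaurants” together as one entity instead of tagging “restaurants” on its own.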

This same multi-layered analysis applies to web content. When Google indexes your page, NLP extracts entities, analyzes syntax and semantic relationships, and builds a comprehensive understanding of what your content means. Semantic SEO works because it creates content that’s easy for NLP systems to understand, interpret, and confidently match to relevant queries.

Entity Recognition and Semantic Indexing

Entity recognition is the process of identifying and categorizing named entities in text. Advanced NLP systems don’t just find entities—they disambiguate them (is “Jordan” a person, a country, or a shoe brand?), classify them (person, place, organization, concept), and map their attributes and relationships.
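Disambiguation can be illustrated with a simplified version of the Lesk approach: choose the sense whose description shares the most words with the surrounding text. The sense inventory and the `disambiguate` helper are hypothetical, and the sketch only lowercases and splits on whitespace, so it is a rough illustration of the idea rather than how Google resolves entities.

```python
# Simplified Lesk-style disambiguation: pick the sense whose description words
# overlap most with the words around the ambiguous term.
# The sense inventory below is a hypothetical, hand-written example.
SENSES = {
    "Jordan (country)": {"amman", "middle", "east", "kingdom", "border", "travel"},
    "Michael Jordan (person)": {"basketball", "bulls", "nba", "player", "championship"},
    "Jordan (shoe brand)": {"sneakers", "shoes", "nike", "retro", "release", "size"},
}


def disambiguate(context: str) -> str:
    """Return the sense label with the largest word overlap with `context`."""
    context_words = set(context.lower().split())
    return max(SENSES, key=lambda sense: len(SENSES[sense] & context_words))


print(disambiguate("new Jordan sneakers release date and size guide"))
print(disambiguate("best time to travel to Jordan and visit Amman"))
```

The surrounding words (“sneakers,” “size” versus “travel,” “Amman”) do the work, which is why giving entities clear context in your content matters.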

Once entities are identified, semantic indexing maps how concepts and entities relate to each other. Google builds a semantic index—essentially a map of meaning—that connects related concepts even when they don’t share keywords. This is why searching “car maintenance” can return results about “vehicle servicing” or “automobile upkeep”—the semantic index understands these are related concepts.
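A toy semantic index can show how “car maintenance” reaches pages about “vehicle servicing.” This sketch assumes a hand-written mapping from words to concept IDs (`VEHICLE`, `UPKEEP`, and the sample page URLs are all hypothetical) and builds an inverted index keyed by concept instead of by keyword.

```python
from collections import defaultdict

# Hypothetical word-to-concept map: different words with the same meaning
# share one concept ID.
CONCEPTS = {
    "car": "VEHICLE", "vehicle": "VEHICLE", "automobile": "VEHICLE",
    "maintenance": "UPKEEP", "servicing": "UPKEEP", "upkeep": "UPKEEP",
    "recipes": "FOOD",
}

# Hypothetical pages to index (URL -> title).
PAGES = {
    "/vehicle-servicing": "Vehicle servicing checklist",
    "/automobile-upkeep": "Automobile upkeep on a budget",
    "/pasta-recipes": "Easy pasta recipes",
}


def to_concepts(text: str) -> set[str]:
    """Map each known word to its concept ID; unknown words are ignored."""
    return {CONCEPTS[word] for word in text.lower().split() if word in CONCEPTS}


def build_index(pages: dict[str, str]) -> dict[str, set[str]]:
    """Inverted index keyed by concept ID instead of by keyword."""
    index: dict[str, set[str]] = defaultdict(set)
    for url, title in pages.items():
        for concept in to_concepts(title):
            index[concept].add(url)
    return index


def search(index: dict[str, set[str]], query: str) -> list[str]:
    """Return pages covering every concept in the query, sorted by URL."""
    concepts = to_concepts(query)
    if not concepts:
        return []
    matches = set.intersection(*(index.get(c, set()) for c in concepts))
    return sorted(matches)


index = build_index(PAGES)
print(search(index, "car maintenance"))
```

Neither matching page contains the words “car” or “maintenance”; both are found because their titles map to the same concepts as the query.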

For SEO, this means clearly identifying and contextualizing entities in your content. Use specific entity names, provide contextual information that helps Google disambiguate them, and create semantic connections by discussing related entities and concepts together.

Vector Space Analysis and Embeddings

At a technical level, modern NLP uses vector space models and word embeddings to understand semantic similarity. This sounds complex, but the concept is straightforward: algorithms convert words and phrases into numerical representations (vectors) that capture their meaning.

Words with similar meanings have similar vector representations, positioning them close together in mathematical “space.” This allows algorithms to understand that “automobile” and “vehicle” are semantically similar even though they are entirely different words. It enables matching queries to content based on meaning rather than exact keywords.
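Cosine similarity is a common way to measure how close two vectors are. The sketch below uses hand-made three-dimensional vectors purely for illustration (the numbers are invented, not taken from any real model); real embeddings are learned from large text corpora and have hundreds of dimensions.

```python
import math

# Hand-made 3-dimensional "embeddings" for illustration only; the values are
# invented. Real models learn much larger vectors from text.
EMBEDDINGS = {
    "automobile": [0.90, 0.80, 0.10],
    "vehicle": [0.85, 0.75, 0.20],
    "banana": [0.10, 0.05, 0.95],
}


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


for word in ("vehicle", "banana"):
    score = cosine_similarity(EMBEDDINGS["automobile"], EMBEDDINGS[word])
    print(f"automobile vs {word}: {score:.3f}")
```

With these made-up vectors, “automobile” scores close to 1.0 against “vehicle” and far lower against “banana,” which is the property that lets search systems match by meaning.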

For content creators, this reinforces why natural, varied language works better than keyword repetition. Use synonyms and related terms naturally as you explain concepts; they tend to appear on their own when you explain ideas thoroughly.

Sources:

1. https://niumatrix.com/semantic-seo-guide/

2. https://searchengineland.com/guide/semantic-seo

3. https://seranking.com/blog/semantic-seo/

4. https://abovea.tech/semantic-seo-guide-2025/

Discover more in our series: How to Use Semantic SEO and Natural Language Processing to Boost Rankings

Part 1 – What is Semantic SEO?

Part 2 – How Google Uses NLP: BERT, MUM & AI Overviews Explained

Part 4 – Entity SEO & Topic Clusters: The Future of Topical Authority