Marketing Is Changing Fast: AI Is the Reason Most Teams Miss

TL;DR

Your content calendar is full. Your tools are running. Your output is up. And your pipeline still isn’t moving the way your board expects it to.

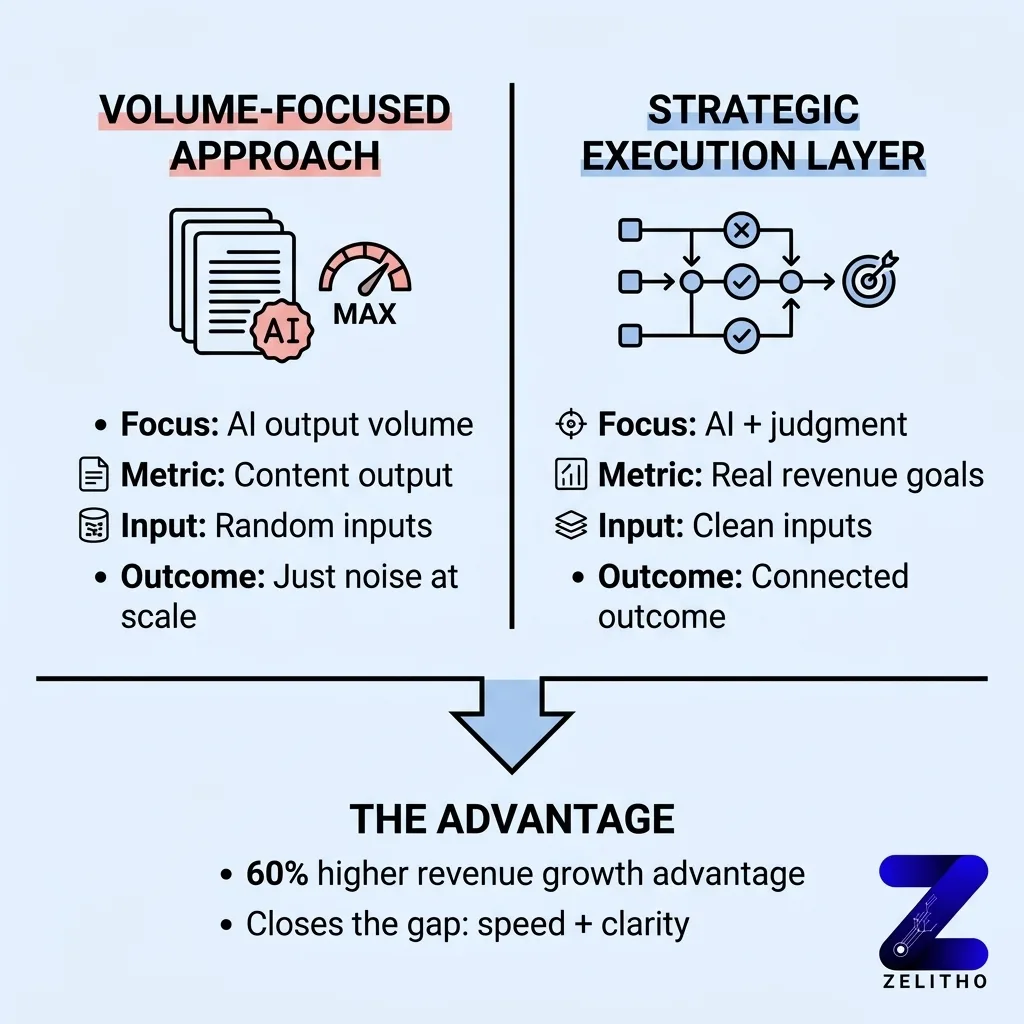

Most teams treat AI adoption as a completion task. They add tools, accelerate output, and measure success by volume. The problem is that volume without a connected outcome is just noise at scale. Ninety-four percent of organizations already use AI in marketing [1], but only about half feel they have adequately adopted it [1]. That gap is not a tool gap. It is a decision gap.

The Strategic Execution Layer is a three-part framework built for senior marketers, founders scaling content, and agency operators managing multiple clients. It closes the structural gap between AI speed and strategic clarity. It works by demanding clean inputs, tying every automation to a measurable outcome, and preserving human judgment at every decision point.

Why Are Most AI Marketing Strategies Failing Despite High Adoption?

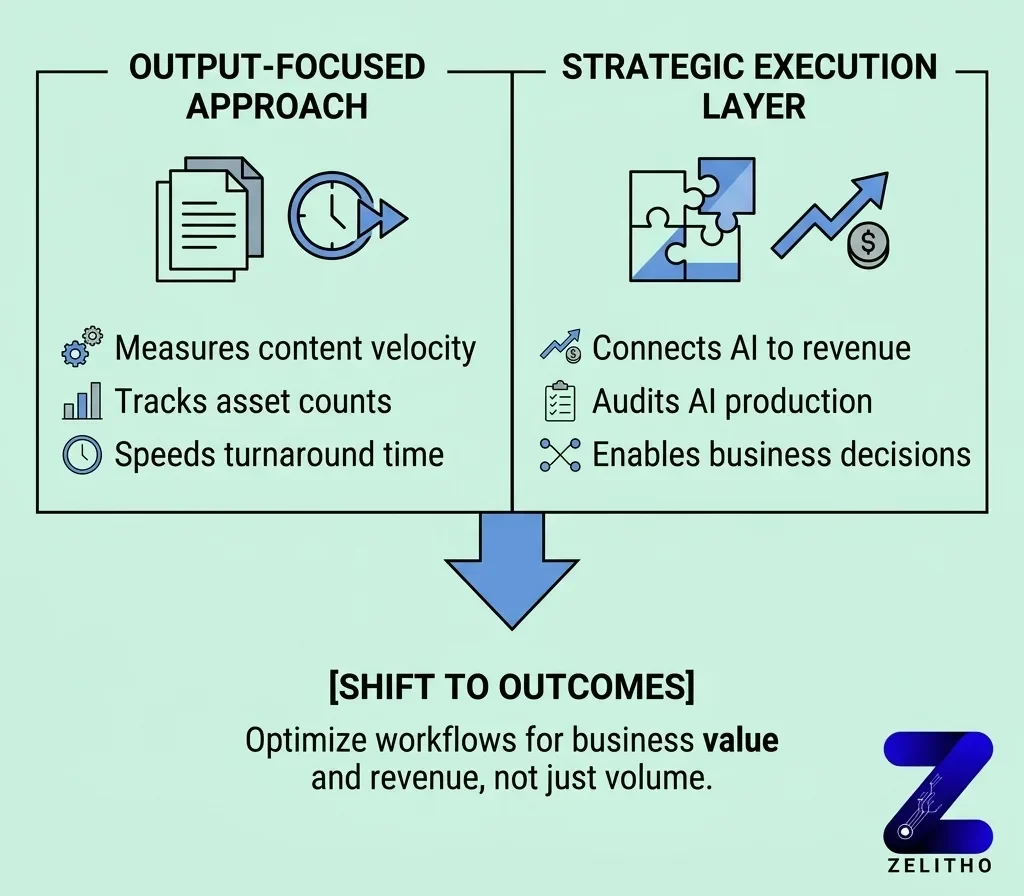

Most AI marketing strategies fail because teams optimize for output, not outcomes. They measure content velocity, asset count, and turnaround time. None of those metrics connect to revenue. The Strategic Execution Layer addresses this directly: audit what your AI is producing, then ask what business decision it supports. If the answer is unclear, the workflow is broken.

Speed Without Strategy Is the Real Failure Mode

You are sitting in a campaign review. The deck shows 47 marketing assets produced in a single session [2]. The team is proud. The tools worked. And then someone asks which asset drove a qualified lead. Silence.

That silence is the failure mode.

Speed is not the problem. Disconnected speed is. When AI accelerates execution without a strategy gate, teams produce more of the wrong thing faster. Forty-seven actionable assets in a few hours [2] is real productivity. But productivity measured in asset count is not a revenue signal.

The sting: you did not fall behind because competitors moved faster. You fell behind because they moved toward something specific and you moved toward volume.

Ninety-four percent of organizations now use AI to prepare or execute marketing activities [1]. The adoption race is over. Everyone is in it. The new gap is between teams that use AI to hit output targets and teams that use AI to hit revenue targets. Those are not the same race.

One agency reported that AI made campaign research ten times faster [1]. That is a real efficiency gain. But faster research only matters if the research question was worth asking. If the research fed a campaign that was not tied to a conversion goal, the speed produced nothing useful.

Stop celebrating output velocity. Start auditing outcome alignment.

That is the first principle inside the Strategic Execution Layer. Every AI-assisted workflow must have a defined business question at its origin. Not a content category. Not a channel. A question with a measurable answer.

You Think You Are Behind on Tools. You Are Actually Behind on Decision Quality.

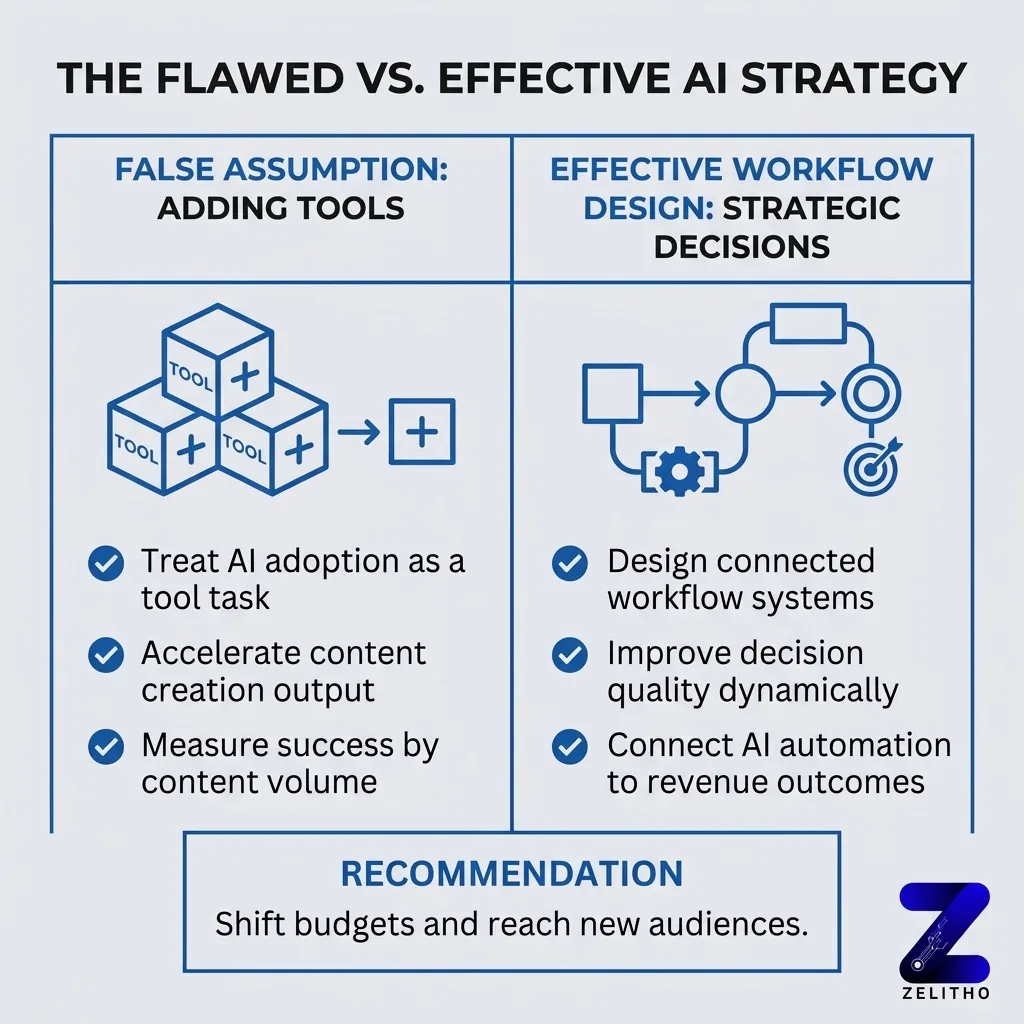

Here is the false assumption most teams carry: adding another AI tool closes the competitive gap.

It does not.

Only about half of marketers feel they have adequately adopted AI, despite nearly all of them using it [1]. That statistic is not a technology problem. It is a workflow design problem. The tools are present. The decisions around them are not.

The most AI-mature 20% of marketing organizations do something specific. They shift budgets dynamically and reach audience segments in real time [1]. That capability is not a feature of any single tool. It is the result of decision infrastructure built around the tools: clean data inputs, defined triggers, and judgment checkpoints that a human owns.

Most teams skip the judgment checkpoint entirely.

They prompt the AI. They review the output for tone and grammar. They publish. No one asks: does this output serve the specific conversion goal we set for this quarter? No one compares the asset to the audience segment it was meant to move.

Put plainly: stop buying tools that solve for speed. Start designing checkpoints that solve for direction.

Here is what that looks like in practice. Before any AI-assisted content goes to production, one person answers three questions. What decision does this content support? Which audience segment does it address? What does a successful outcome look like in 30 days? If no one can answer all three, the content does not move forward.

That is a decision-quality gate. It costs zero budget. It requires no new tool. It requires discipline.
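The gate is simple enough to sketch in Python. This is a minimal illustration, assuming each answer is recorded as free text and a blank answer means no one could answer. `GateAnswers`, `missing_answers`, and `passes_gate` are hypothetical names, not part of any tool. The code only checks that all three questions were answered; judging whether an answer is good stays with the person who owns the gate.

```python
from dataclasses import dataclass


@dataclass
class GateAnswers:
    """Answers to the three gate questions (hypothetical field names)."""
    decision_supported: str   # What decision does this content support?
    audience_segment: str     # Which audience segment does it address?
    success_in_30_days: str   # What does a successful outcome look like in 30 days?


def missing_answers(answers: GateAnswers) -> list[str]:
    """Names of questions left blank or whitespace-only."""
    return [name for name, value in vars(answers).items() if not value.strip()]


def passes_gate(answers: GateAnswers) -> bool:
    """Content moves to production only if all three questions are answered."""
    return not missing_answers(answers)


draft = GateAnswers(
    decision_supported="Choose between self-serve and sales-assisted onboarding",
    audience_segment="Mid-market operations leads",
    success_in_30_days="",  # no one could answer this one
)
print(passes_gate(draft))      # False
print(missing_answers(draft))  # ['success_in_30_days']
```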

The gap between AI adoption and AI results is a decision-quality problem. Teams that close it do not do so by purchasing a better platform. They do it by building better questions into every step of the workflow.

What AI-Mature Teams Do That Everyone Else Ignores

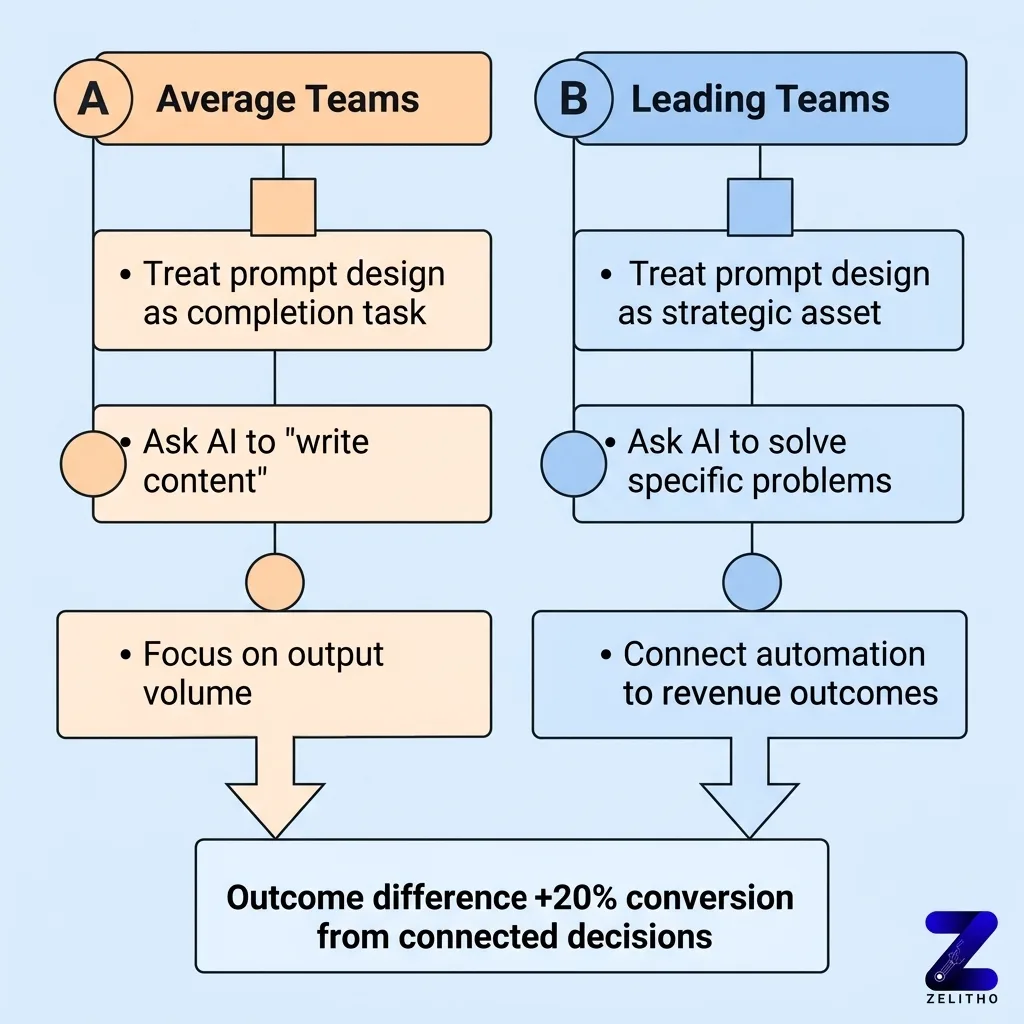

The behavioral difference between leading teams and average teams is not the sophistication of their tools. It is the structure of their inputs.

AI-mature organizations treat prompt design as a strategic asset. They do not ask AI to write content. They ask AI to solve a specific problem for a defined audience at a specific stage of a buying decision. That distinction changes the output entirely.

AI-based personalization tied to specific content decisions is associated with a 20% increase in sales conversions on average [1]. That number does not come from using AI more often. It comes from using it more precisely. The input defines the output. Garbage in, generic out.

| Behavior | Average Teams | AI-Mature Teams |
|---|---|---|
| Input design | Topic or keyword | Audience, stage, and goal |
| Output review | Grammar and tone check | Outcome alignment check |
| Measurement | Volume of assets published | Conversion rate per asset |

The table above is not aspirational. It is diagnostic. If your team sits in the Average Teams column, your AI is producing noise faster than before.
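To make the Measurement row concrete, here is a hypothetical Python sketch that reports both numbers for the same set of assets. The asset names and figures are invented for illustration, and it assumes conversion rate means attributed conversions divided by views; your own attribution model may define it differently.

```python
from dataclasses import dataclass


@dataclass
class AssetStats:
    name: str
    views: int        # assumed denominator for the conversion rate
    conversions: int  # assumed: qualified leads attributed to this asset


def conversion_rate(asset: AssetStats) -> float:
    """Attributed conversions per view; 0.0 when an asset has no views."""
    return asset.conversions / asset.views if asset.views else 0.0


def rank_by_conversion(assets: list[AssetStats]) -> list[str]:
    """Asset names ordered from highest to lowest conversion rate."""
    return [a.name for a in sorted(assets, key=conversion_rate, reverse=True)]


# Invented example data.
assets = [
    AssetStats("pricing-guide", views=1200, conversions=36),
    AssetStats("trend-roundup", views=5000, conversions=5),
    AssetStats("integration-faq", views=800, conversions=32),
]

print("Assets published:", len(assets))  # the Average Teams metric
for asset in assets:                      # the AI-Mature Teams metric
    print(f"{asset.name}: {conversion_rate(asset):.1%}")
print(rank_by_conversion(assets))
```

In this invented data, the most-viewed asset converts worst. Counting assets published would never surface that.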

Consider one example: a marketing team running a B2B SaaS product used AI to generate 30 blog posts in a quarter. Traffic was up. Leads were flat. They audited all 30 posts and found that 22 addressed topics their ideal customer profile never searched for. They rebuilt their input process, requiring a positioning brief before each prompt. The next quarter, they produced 12 posts. Pipeline contribution doubled.

We saw volume without direction. We added an input gate. Revenue signal appeared.

AI-mature teams also protect one thing rigorously: human judgment at inflection points. They use automation to scale execution. They use people to make calls about direction, audience fit, and message positioning. The automation does not make those calls. A person does, with AI-generated data to support the decision.

Leading marketers using AI with this structure have achieved 60% greater revenue growth than peers [1]. That number is the consequence of structural difference, not tool difference.

The Strategic Execution Layer: A Framework for Connecting Automation to Outcomes

The Strategic Execution Layer has three components. They are not sequential phases. They operate simultaneously inside every workflow.

Component 1: Clean Inputs

Every AI-assisted workflow starts with a brief. The brief answers four questions. Who is the audience? What decision are we supporting? What does a measurable win look like? What constraint or objection does this content address? Without a brief, the AI produces generic output. With a brief, it produces directional content.

Automation software can raise sales productivity by 14.5% and reduce marketing overhead by 12% [1]. Those gains do not appear automatically. They appear when the automation is fed clean inputs and pointed at specific tasks. A poorly designed prompt feeding an automated sequence produces scaled mediocrity.
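One way to enforce the brief is to make it the only path to a prompt. The Python sketch below is illustrative: `Brief` and `build_prompt` are hypothetical names, the fields map to the four brief questions, and it only assembles a prompt string rather than calling any particular AI service.

```python
from dataclasses import dataclass, fields


@dataclass
class Brief:
    audience: str    # Who is the audience?
    decision: str    # What decision are we supporting?
    win_metric: str  # What does a measurable win look like?
    objection: str   # What constraint or objection does this content address?


def build_prompt(brief: Brief, task: str) -> str:
    """Build a prompt only from a complete brief, embedding every field."""
    blank = [f.name for f in fields(brief) if not getattr(brief, f.name).strip()]
    if blank:
        raise ValueError("Brief incomplete, missing: " + ", ".join(blank))
    return "\n".join([
        f"Task: {task}",
        f"Audience: {brief.audience}",
        f"Decision this content supports: {brief.decision}",
        f"Measurable win: {brief.win_metric}",
        f"Objection to address: {brief.objection}",
    ])


# Invented example brief.
brief = Brief(
    audience="Finance directors at mid-sized companies",
    decision="Whether to pilot automated invoice matching this quarter",
    win_metric="Demo requests from this segment within 30 days",
    objection="Integration effort with the existing accounting system",
)
print(build_prompt(brief, "Draft a 600-word article"))
```

The design choice is deliberate: a missing field raises an error instead of falling back to a generic prompt, which is exactly the failure this component exists to prevent.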

Component 2: Outcome-Tied Automation

Every automated process must connect to a named outcome before it runs. Not a content format. Not a channel. An outcome. “This email sequence exists to move prospects from consideration to demo request within 14 days.” That sentence changes every decision made inside the automation.

AI content tools save approximately three hours per piece of content and 2.5 hours per day [1]. That time savings only converts to business value when the content produced moves a measurable metric. If the saved hours produce content that does not convert, the team is simply spending less time on activities that do not matter.
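The email sequence example can be sketched in Python, assuming the outcome is stored as a description plus a time window and that each prospect's sequence entry date and demo request date are recorded. `Outcome`, `Prospect`, and `outcome_rate` are hypothetical names, and the dates are invented. Creating an `Outcome` without a description or window fails, so the automation cannot be configured without a named outcome.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass(frozen=True)
class Outcome:
    description: str  # e.g. consideration -> demo request
    window_days: int  # time limit named in the outcome statement

    def __post_init__(self) -> None:
        # Refuse to exist without a named outcome and a positive window.
        if not self.description.strip() or self.window_days <= 0:
            raise ValueError("An automation needs a named outcome and a time window.")


@dataclass
class Prospect:
    entered_sequence: date
    demo_requested: Optional[date] = None  # None means no demo request yet


def outcome_rate(prospects: list[Prospect], outcome: Outcome) -> float:
    """Share of prospects who requested a demo within the outcome window."""
    if not prospects:
        return 0.0
    window = timedelta(days=outcome.window_days)
    hits = sum(
        1 for p in prospects
        if p.demo_requested is not None
        and timedelta(0) <= p.demo_requested - p.entered_sequence <= window
    )
    return hits / len(prospects)


demo_outcome = Outcome("Move prospects from consideration to demo request", window_days=14)
prospects = [
    Prospect(date(2025, 3, 3), date(2025, 3, 10)),  # 7 days: counts
    Prospect(date(2025, 3, 3), date(2025, 3, 24)),  # 21 days: too late
    Prospect(date(2025, 3, 5)),                     # no request
    Prospect(date(2025, 3, 6), date(2025, 3, 20)),  # exactly 14 days: counts
]
print(outcome_rate(prospects, demo_outcome))  # 0.5
```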

Component 3: Human Judgment at Decision Points

The Strategic Execution Layer requires a human checkpoint at three moments: before the workflow launches, at the midpoint review, and at the output audit. These checkpoints are not approvals. They are directional questions. Is this still aimed at the right outcome? Has anything changed in the audience or the market that the AI does not know?

AI does not know your sales team had a conversation last week that reframed the buying objection. A human does. That information belongs at the checkpoint, and it changes the output.

This is where most teams fail. They design the workflow, launch it, and check back at the end of the month. By then, the content has run, the budget is spent, and the direction was wrong for three weeks.
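Here is a small Python sketch of the three checkpoints for a 30-day workflow. It assumes the midpoint falls halfway through the run, rounded down to a whole day. `checkpoint_schedule` and `DIRECTIONAL_QUESTIONS` are hypothetical names. The dates are the easy part; what matters is that the midpoint review lands before the budget is spent.

```python
from datetime import date, timedelta

# The directional questions asked at every checkpoint.
DIRECTIONAL_QUESTIONS = (
    "Is this still aimed at the right outcome?",
    "Has anything changed in the audience or the market that the AI does not know?",
)


def checkpoint_schedule(launch: date, duration_days: int) -> dict[str, date]:
    """Dates for the pre-launch check, the midpoint review, and the output audit."""
    return {
        "before launch": launch,
        "midpoint review": launch + timedelta(days=duration_days // 2),
        "output audit": launch + timedelta(days=duration_days),
    }


for checkpoint, when in checkpoint_schedule(date(2025, 4, 1), 30).items():
    print(f"{when.isoformat()}  {checkpoint}")
    for question in DIRECTIONAL_QUESTIONS:
        print(f"    - {question}")
```

With only an end-of-month check, the first look at direction would come at the output audit, after the full run has already happened.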

Apply it at the campaign level first. Pick one active campaign. Identify where output is being produced without a connected outcome. Redesign that step with a clean input brief, a named outcome, and a human checkpoint. Measure the difference over 30 days.

The Strategic Execution Layer Is Not the Last Step. It Is the Missing One.

The Strategic Execution Layer is not a technology investment. It is a discipline. Teams that close the gap between AI speed and strategic clarity do not do so by adding more tools. They do it by designing better decision points, demanding cleaner inputs, and measuring outputs against goals rather than volume. The 60% revenue growth advantage held by AI-mature marketing organizations is not a product of automation alone. It is the product of automation paired with judgment. Apply the Strategic Execution Layer to your current workflow. Start with one campaign. Identify where output is being produced without a connected outcome. That is where the miss is happening.

FAQ

What is the Strategic Execution Layer?

The Strategic Execution Layer is a three-part framework for connecting AI-assisted marketing workflows to measurable business outcomes. Its components are clean inputs, outcome-tied automation, and human judgment at decision checkpoints. It is applied at the campaign level and requires no new tools.

Why do most AI marketing strategies fail despite high adoption?

Most fail because teams measure output volume instead of outcome alignment. AI accelerates execution, but if the execution is not pointed at a specific business goal, the acceleration produces nothing useful. The failure is structural, not technological.

What do AI-mature marketing teams do differently?

AI-mature teams design inputs before launching any workflow. They tie every automation to a named outcome. They keep human judgment active at inflection points. Average teams prompt AI, review tone, and publish. The difference is discipline around decision-making, not access to better tools.

How quickly can a team start applying the Strategic Execution Layer?

Start with one campaign in one week. Audit the inputs, name the outcome, and identify where human judgment is currently absent. The first application takes one focused session. The discipline compounds over subsequent campaigns.

Does the Strategic Execution Layer require a large team?

No. A founder running a lean operation can apply it alone. It requires one brief per workflow, one named outcome per campaign, and one human review at the midpoint. The structure scales up or down based on team size.

References and Citations

[1] https://www.techclass.com/resources/learning-and-development-articles/how-marketing-teams-are-using-ai-to-work-smarter-not-harder

[2] https://chandlernguyen.com/blog/2026/03/21/why-most-ai-marketing-tools-feel-fast-but-weaken-team-judgment/