How to Audit Your GEO Readiness: A Checklist for AI-Era SEO and Content Marketing

TL;DR

• You ranked page one on Google. You queried your product category in ChatGPT. Your brand wasn’t mentioned once. That gap is not a content volume problem. It’s a structural one, and it’s already costing you citation share.

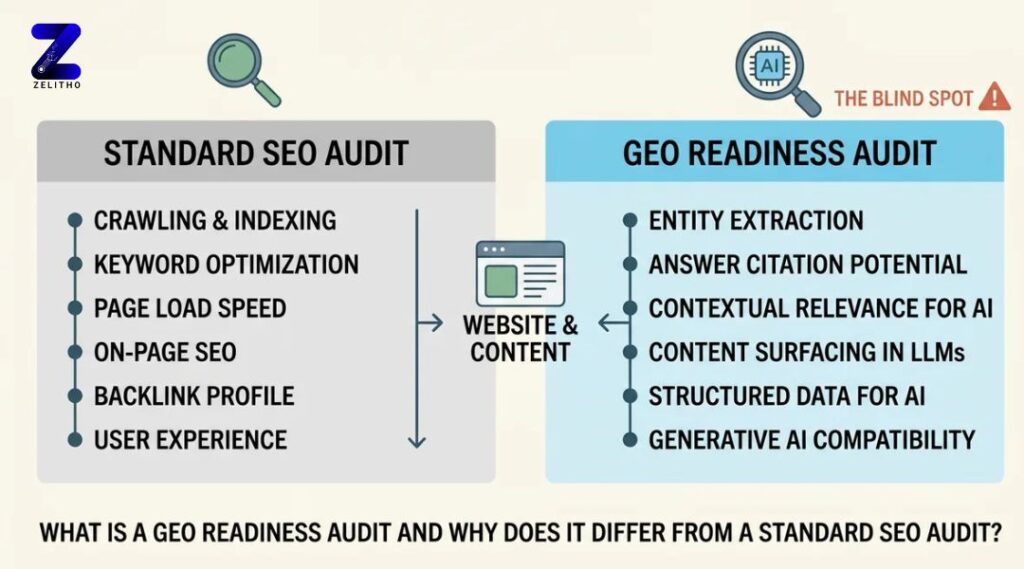

• Standard SEO audits test crawlability, page speed, and backlink profiles. They don’t test whether GPTBot can read your site, whether your answers surface in the first sentence, or whether AI systems can extract a clear entity definition from your pages. Those are different signals entirely.

• The GEO Readiness Stack is a two-layer audit system built for marketers running content at scale. It audits the technical foundation first and the content signal layer second, then closes with a monthly monitoring loop. Senior marketers, agency owners, and founders scaling content use it to appear in AI-generated answers, not just in search result pages.

What Is a GEO Readiness Audit and Why Does It Differ from a Standard SEO Audit?

A GEO readiness audit tests whether AI search systems can extract, cite, and surface your content in generated responses. A standard SEO audit does not test for this. The two processes overlap in some areas but measure fundamentally different signals. If you’ve only run traditional audits, you have a blind spot, and it’s larger than you think.

Why Your SEO Audit Process Is Already Outdated (And What That Costs You)

You’re looking at your Google Analytics dashboard. Traffic is stable. Rankings look clean. Then someone on your team asks why a competitor with lower domain authority keeps showing up in Perplexity answers for your core product category. You query it yourself. They’re right.

That moment is happening in marketing teams across every vertical right now.

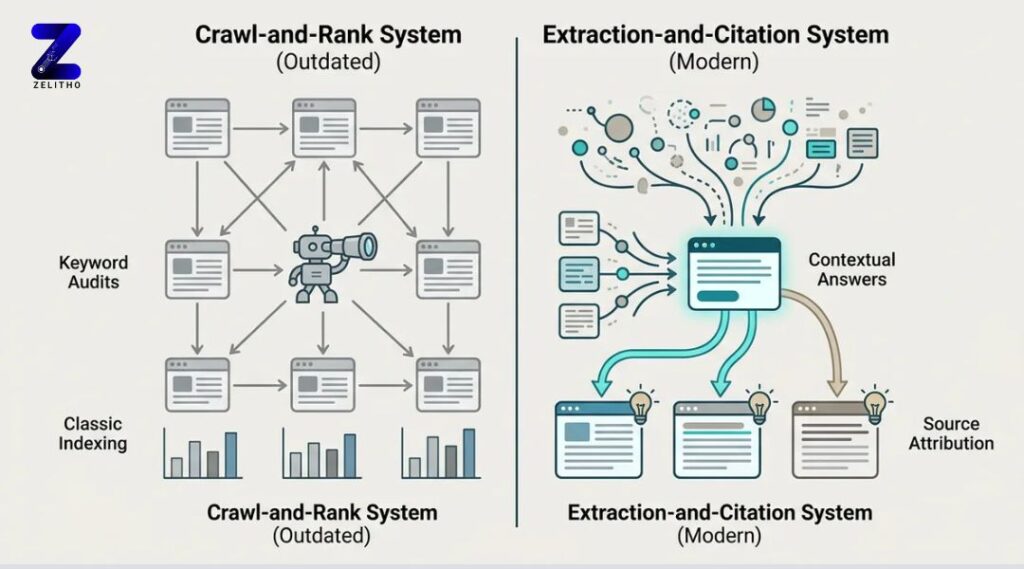

The rules of online discovery established over the past two decades were built for crawl-and-rank systems, not extraction-and-citation systems. [1] Most audit frameworks assume that if a page ranks, it gets found. That assumption no longer holds across all search surfaces.

Here’s the false assumption worth naming directly: strong Google rankings do not transfer to AI search visibility.

A page can hold a top-three Google ranking and be completely absent from ChatGPT, Perplexity, or Gemini responses to the same query. The systems operate differently. AI tools don’t hand back a ranked list of pages. They extract answers and cite the sources that meet their extraction criteria. If your content doesn’t meet those criteria, you don’t appear, regardless of your domain authority.

The cost is concrete. Run this test now: query your core product category in three AI tools, using ten different prompt phrasings per tool. If your brand doesn’t appear in any of those thirty responses, you have a citation deficit. That deficit represents category-level visibility your competitor may already be capturing.

Standard SEO audits don’t test for this. They check crawlability, page speed, canonical tags, and backlink profiles. None of those signals tell you whether GPTBot can read your content, whether your answers surface in the first 100 words, or whether an AI system can identify a clear entity definition on your page.

This is why the GEO Readiness Stack exists. It runs as a two-layer audit: a technical foundation layer and a content signal layer. Each layer targets signals that traditional audits ignore. The sections below walk through both.

Layer One: The Technical Foundation Audit (Weeks 1–2)

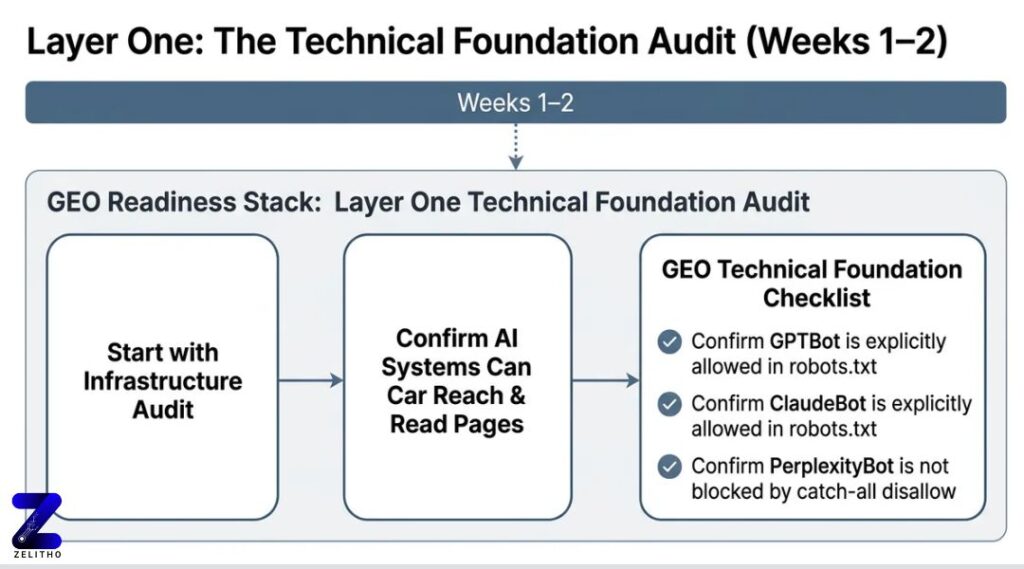

Start with infrastructure. Before any content restructuring makes sense, you need to confirm that AI systems can actually reach and read your pages.

The GEO Readiness Stack begins here. In a phased AI search audit, technical foundation work occupies weeks one and two. [2] This is not a standard technical SEO pass. The checklist is different.

GEO Technical Foundation Checklist:

- Confirm GPTBot and ClaudeBot are explicitly allowed in your robots.txt file

- Confirm PerplexityBot is not blocked by catch-all disallow rules

- Validate structured data markup: FAQ schema, HowTo schema, Article schema

- Audit heading hierarchies: each heading should answer a discrete question independently

- Confirm page-level entity clarity: every page establishes who, what, and why without ambiguity

- Test that your sitemap is current and includes your highest-traffic content pages
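For the structured data item, it helps to see what FAQ markup actually contains. The sketch below builds schema.org `FAQPage` JSON-LD from question-and-answer pairs. The helper name `faq_jsonld` and the sample question are hypothetical; the `FAQPage`, `Question`, and `Answer` types and the `mainEntity`, `name`, and `acceptedAnswer` properties come from the schema.org vocabulary. Treat it as a starting point and run the output through a schema validator before publishing.

```python
import json

def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

# Hypothetical Q&A pair: the answer opens with a complete one-sentence answer.
pairs = [
    (
        "What is a GEO readiness audit?",
        "A GEO readiness audit tests whether AI search systems can extract, "
        "cite, and surface your content in generated responses.",
    ),
]

markup = json.dumps(faq_jsonld(pairs), indent=2)
# Place the output on the page inside <script type="application/ld+json">.
print(markup)
```

Keeping the answer text identical to the visible on-page answer avoids markup that says something the page itself doesn’t.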

The robots.txt issue causes more invisible damage than most marketing teams realize.

A SaaS company added a catch-all disallow rule during a routine security patch, intending to block scraping bots. AI crawlers, GPTBot and PerplexityBot among them, got blocked in the same sweep. Six weeks later, the company’s category pages stopped appearing in Perplexity answers. Organic Google traffic was unchanged, so no alert fired. No one connected the two until an audit caught the rule three months later.

That’s a fixable problem that sat open for a quarter.
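A catch-all rule like that one is easy to catch with a short script. This sketch uses Python’s standard-library `urllib.robotparser` to list which AI crawler user agents a robots.txt file blocks for a given URL. The two robots.txt bodies, the `blocked_crawlers` helper, and the example.com URL are hypothetical, and the standard parser only approximates each vendor’s crawler (it does not implement wildcard path matching, for instance), so confirm edge cases against each vendor’s crawler documentation. To check a live site, call `set_url()` and `read()` instead of `parse()`.

```python
from urllib.robotparser import RobotFileParser

AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]

def blocked_crawlers(robots_txt, url, agents=AI_CRAWLERS):
    """Return the user agents that this robots.txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]

# Hypothetical catch-all rule: only Googlebot is allowed, every other bot is blocked.
catch_all = """\
User-agent: Googlebot
Allow: /

User-agent: *
Disallow: /
"""

# Hypothetical fix: name the AI crawlers explicitly so the catch-all no longer applies to them.
fixed = """\
User-agent: GPTBot
User-agent: ClaudeBot
User-agent: PerplexityBot
Allow: /

User-agent: Googlebot
Allow: /

User-agent: *
Disallow: /
"""

page = "https://example.com/category/"
print("catch-all blocks:", blocked_crawlers(catch_all, page))
# → catch-all blocks: ['GPTBot', 'ClaudeBot', 'PerplexityBot']
print("fixed blocks:", blocked_crawlers(fixed, page))
# → fixed blocks: []
```

Running a check like this after every robots.txt change is cheaper than discovering the block a quarter later.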

Here’s what separates a GEO technical audit from a traditional one:

| Audit Area | Traditional SEO Checklist | GEO Technical Checklist |
|---|---|---|
| Bot access | Googlebot, Bingbot allowed | GPTBot, ClaudeBot, PerplexityBot explicitly allowed |
| Structured data | Schema for rich snippets | FAQ, HowTo, Article schema for extraction |
| Heading structure | Keyword placement in H1–H3 | Each heading answers a discrete standalone question |
| Entity clarity | Page topic relevance | Named entity with clear relationships defined on-page |
| Crawl priority | Core pages indexed | AI-relevant pages included in current sitemap |

If your robots.txt blocks any AI crawler, fix it today. Not next sprint. Today.

That’s not a roadmap item. It’s a same-day correction. Every day that block stays live, those pages are invisible to systems that might otherwise cite them.
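The crawl-priority row in the table can be checked with a script too. The sketch below parses a sitemap with Python’s `xml.etree.ElementTree` and reports which priority URLs are missing or carry a stale `<lastmod>` date. The 90-day staleness window, the `sitemap_problems` helper, and the sample URLs are assumptions for illustration; the checklist doesn’t define “current,” so set the window to match how often your pages actually change.

```python
import xml.etree.ElementTree as ET
from datetime import date

# Namespace defined by the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

def sitemap_problems(sitemap_xml, priority_urls, today, max_age_days=90):
    """Flag priority URLs that are missing from the sitemap or look stale."""
    root = ET.fromstring(sitemap_xml)
    lastmods = {}
    for entry in root.findall("sm:url", NS):
        loc = entry.findtext("sm:loc", default="", namespaces=NS).strip()
        lastmods[loc] = entry.findtext("sm:lastmod", default="", namespaces=NS).strip()

    problems = []
    for url in priority_urls:
        if url not in lastmods:
            problems.append((url, "missing"))
        elif not lastmods[url]:
            problems.append((url, "no lastmod"))
        # <lastmod> may be a date or a full timestamp; the first 10 chars are YYYY-MM-DD.
        elif (today - date.fromisoformat(lastmods[url][:10])).days > max_age_days:
            problems.append((url, "stale"))
    return problems

# Hypothetical sitemap and priority list.
sitemap = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/pricing</loc><lastmod>2024-05-01</lastmod></url>
  <url><loc>https://example.com/guide</loc><lastmod>2023-01-15</lastmod></url>
</urlset>"""

priority = [
    "https://example.com/pricing",
    "https://example.com/guide",
    "https://example.com/features",
]

result = sitemap_problems(sitemap, priority, today=date(2024, 6, 1))
print(result)
# → [('https://example.com/guide', 'stale'), ('https://example.com/features', 'missing')]
```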

Layer Two: The Content Signal Audit (What AI Systems Actually Extract)

Here’s the concern no one wants to say directly: the content library you’ve spent years building may already be invisible to AI systems. Not because the quality is poor. Because the formatting was built for human readers, not machine extraction.

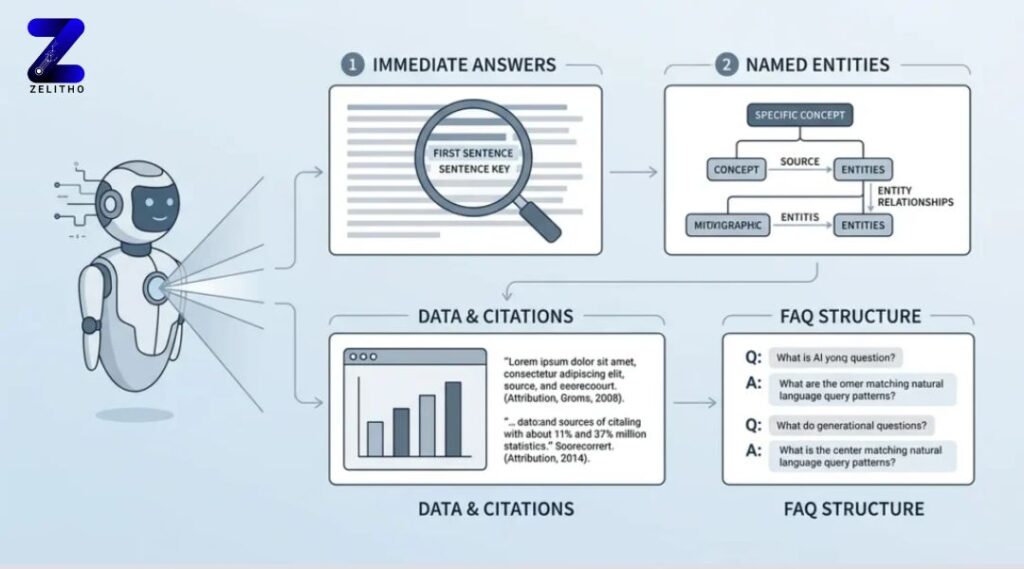

AI systems tend to favor a specific content structure. They pull answers that appear in the first sentence of a section. They cite named entities with clear relationships. They reference statistics with source attribution. They surface FAQ-style structures that match natural language queries.

Over 500 brands have reportedly improved AI search performance by aligning content structure to extraction patterns. [2] The correction isn’t producing more content. It’s restructuring what already exists.

Stop writing for scroll depth. Start writing for extraction.

Long-form pillar pages with buried answers actively work against AI citability. The instinct to create a 3,000-word guide that covers every subtopic is understandable. But if the core answer appears at word 1,400, an AI system is unlikely to surface it. It is far more likely to surface a competitor who answered the same question in the first two sentences of a shorter page.

Reframe the approach: write for extraction first, depth second. Lead every section with a direct one-sentence answer. Then support it.

A financial services firm restructured its mortgage FAQ page using this approach. Each section began with a single sentence that answered the question completely. AI citation appearances for that page increased within 60 days of reindexing. The page didn’t get longer. It got cleaner.
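A rough way to see where an answer sits is a word-offset check. This is a heuristic sketch, not a model of how any AI system parses a page: you supply the sentence you consider the core answer, and the hypothetical helper `answer_word_offset` returns the word position where it first appears. The 100-word cutoff mirrors the first-100-words signal mentioned earlier, and the mortgage text is invented for illustration.

```python
import re

def answer_word_offset(section_text, answer_sentence):
    """Return the 0-based word index where answer_sentence first appears, or None."""
    words = re.findall(r"\w+", section_text.lower())
    target = re.findall(r"\w+", answer_sentence.lower())
    if not target:
        return None
    for i in range(len(words) - len(target) + 1):
        if words[i:i + len(target)] == target:
            return i
    return None

def is_buried(section_text, answer_sentence, limit=100):
    """True if the answer is missing or starts after the first `limit` words."""
    offset = answer_word_offset(section_text, answer_sentence)
    return offset is None or offset >= limit

# Hypothetical section text: the same answer placed first vs. behind 180 words of setup.
answer = "A fixed-rate mortgage keeps the same interest rate for the full term."
direct = answer + " Rates are set at closing and do not move with the market."
buried = "Mortgage rates depend on many factors. " * 30 + answer

print(answer_word_offset(direct, answer))  # → 0
print(answer_word_offset(buried, answer))  # → 180
print(is_buried(direct, answer), is_buried(buried, answer))  # → False True
```

Punctuation is ignored by tokenizing on word characters, so “fixed-rate” matches whether or not the hyphen survives editing.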

Content Signal Audit: Five Criteria Per Page

Run these checks on your five highest-traffic content pages first:

- First-sentence answer: Does the section open with a direct answer, not a setup sentence?

- Named entity clarity: Are the key people, products, or organizations named and described, not referenced with pronouns?

- Cited statistics: Does every data point include an inline source attribution?

- FAQ structure: Are reader questions used as subheadings, not creative headers?

- Answer independence: Can each section be understood without reading the surrounding content?

If a page fails three or more of these criteria, restructure it before producing anything new. New content with the same formatting problems will be just as invisible.
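To keep scoring consistent across pages, the five checks can be recorded as pass/fail values and tallied. A minimal sketch, assuming a human reviewer makes each judgment; the criterion keys and the `audit_page` helper are hypothetical names, and the three-failure cutoff comes from the rule above.

```python
CRITERIA = (
    "first_sentence_answer",
    "named_entity_clarity",
    "cited_statistics",
    "faq_structure",
    "answer_independence",
)

def audit_page(url, checks):
    """Tally failed criteria; a criterion that wasn't recorded counts as a fail."""
    failed = [name for name in CRITERIA if not checks.get(name, False)]
    return {"url": url, "failed": failed, "restructure_first": len(failed) >= 3}

# Hypothetical reviewer judgments for one page (True = pass).
result = audit_page(
    "https://example.com/guide",
    {
        "first_sentence_answer": False,
        "named_entity_clarity": True,
        "cited_statistics": False,
        "faq_structure": False,
        "answer_independence": True,
    },
)
print(result["failed"], result["restructure_first"])
# → ['first_sentence_answer', 'cited_statistics', 'faq_structure'] True
```

Treating an unrecorded criterion as a fail means a half-finished audit can’t quietly pass a page.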

The audit in layer two is not about word count, keyword density, or internal linking. Those metrics belong to the traditional audit. This layer asks a different question: if an AI system reads only this section, can it extract a citable answer?

If the answer is no, that section doesn’t exist in AI search results.

The Monitoring Loop: How to Know If Your GEO Audit Is Actually Working

Completing the two-layer audit is not the finish line. Without a monitoring loop, you can’t tell whether the structural changes you made actually shifted your citation frequency.

Most GEO audits fail here. The technical fixes get made. The content gets restructured. Then nothing gets measured, and three months later no one can say whether it worked.

Build a test question set of 20 to 30 queries that cover your core topics, product categories, and branded terms. These should be phrased as natural language questions, the way a buyer would actually ask them in ChatGPT or Perplexity.

Run that set across at least two AI platforms. Record every result.

The tracking format doesn’t need to be sophisticated. A simple spreadsheet works:

| Date | Query | AI Platform | Brand Cited | Competitor Cited |
|---|---|---|---|---|
| Month/Day | Exact prompt used | ChatGPT / Perplexity | Yes / No | Yes / No |

Run this monthly. Review it quarterly as part of a full audit cycle.
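The same columns also work as a CSV file that any spreadsheet tool can open. This sketch uses Python’s standard `csv` module; the `record` helper, the sample query, and the file name in the comment are hypothetical, and it assumes the Yes/No judgments come from someone reading each AI response.

```python
import csv
import io
from datetime import date

FIELDS = ["Date", "Query", "AI Platform", "Brand Cited", "Competitor Cited"]

def record(writer, run_date, query, platform, brand_cited, competitor_cited):
    """Append one observed AI response to the tracker as a Yes/No row."""
    writer.writerow({
        "Date": run_date.isoformat(),
        "Query": query,
        "AI Platform": platform,
        "Brand Cited": "Yes" if brand_cited else "No",
        "Competitor Cited": "Yes" if competitor_cited else "No",
    })

# In practice, append to a file, e.g. open("geo_tracker.csv", "a", newline=""),
# and write the header only when the file is first created.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=FIELDS)
writer.writeheader()
record(writer, date(2024, 6, 3), "What is the best CRM for a small agency?",
       "Perplexity", brand_cited=False, competitor_cited=True)

rows = list(csv.DictReader(io.StringIO(buffer.getvalue())))
print(rows[0]["Brand Cited"], rows[0]["Competitor Cited"])  # → No Yes
```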

Monthly citation monitoring turns a one-time audit into a compounding system. You start to see patterns. A content restructure in week three may show up in citation data by week ten. A competitor’s new page starts appearing in prompts you used to own. A technical fix clears a bot block, and citation frequency shifts within a few weeks.

Those signals are only visible if you’re tracking.

Define success clearly before you start. Within 90 days of completing the GEO Readiness Stack, your brand should appear in at least 40 percent of AI-generated responses to the directly relevant queries in your test question set. If you fall short of that threshold, the gap points to one of three problems:

- A technical blockage that the technical audit in weeks one and two didn’t catch

- A content structure problem on the pages covering that query topic

- A third-party citation deficit, meaning external sources don’t reference your brand on that topic

Each problem has a different fix. The monitoring data tells you which one you’re dealing with.

The operational habit is simple: block one hour per month to run your query set, update your tracker, and flag any prompts where a competitor appears and you don’t. Then adjust one content or technical element before the next review cycle. That cadence is sustainable. It doesn’t require a dedicated analyst. It requires consistency.
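Once tracker rows accumulate, the two numbers this review relies on can be computed directly: the share of responses that cite your brand, compared with the 40 percent target, and the query-platform pairs where a competitor is cited and you aren’t. A sketch, assuming rows use the tracker’s column names with Yes/No values and have already been filtered to directly relevant queries; the `citation_summary` helper and the sample data are hypothetical.

```python
def citation_summary(rows, target=0.40):
    """Compute the brand citation rate and list competitor-only query/platform pairs.

    rows: dicts with "Query", "AI Platform", "Brand Cited", "Competitor Cited",
    where the last two hold "Yes" or "No".
    """
    total = len(rows)
    cited = sum(1 for r in rows if r["Brand Cited"] == "Yes")
    rate = cited / total if total else 0.0
    competitor_only = sorted(
        (r["Query"], r["AI Platform"])
        for r in rows
        if r["Competitor Cited"] == "Yes" and r["Brand Cited"] == "No"
    )
    return {"citation_rate": rate, "meets_target": rate >= target,
            "competitor_only": competitor_only}

# Hypothetical month of results for two directly relevant queries.
KEYS = ("Query", "AI Platform", "Brand Cited", "Competitor Cited")
sample = [
    ("best CRM for small agencies", "ChatGPT", "Yes", "Yes"),
    ("best CRM for small agencies", "Perplexity", "No", "Yes"),
    ("how to automate client reporting", "ChatGPT", "No", "No"),
    ("how to automate client reporting", "Perplexity", "No", "Yes"),
]
rows = [dict(zip(KEYS, values)) for values in sample]

summary = citation_summary(rows)
print(summary["citation_rate"], summary["meets_target"])  # → 0.25 False
for query, platform in summary["competitor_only"]:
    print("flag:", query, "on", platform)
```

The flagged pairs are the prompts to investigate first against the three problem types listed above.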

The GEO Readiness Stack Is a Quarterly Practice, Not a One-Time Fix

The GEO Readiness Stack (technical foundation first, content signal layer second, monitoring loop third) is not a one-time project. AI search systems update their extraction logic, add new sources, and shift citation weighting, often without announcement. The marketers who keep an edge audit on a schedule, not in response to a traffic drop. Run the technical checklist in weeks one and two. Restructure your highest-traffic content assets in weeks three and four. Install your monthly citation monitoring routine before the quarter closes. That sequence is repeatable. It compounds. And it works whether you’re operating a single-product startup or a multi-brand content operation. The window to build early GEO authority is open. It won’t stay that way.


Sources

[1] https://www.ibeamconsulting.com/blog/seo-geo-audit-concepts-tools/

[2] https://www.getpassionfruit.com/blog/how-to-audit-your-website-for-ai-search-readiness-the-complete-geo-checklist